Risk Management in the Digital World - A Chore or A Necessity?

Written by Ioannis Ntokos*

“Nothing in life is certain but death and taxes,” Benjamin Franklin (or someone before him) once said. But the phrase could well include another component, risk. “Death, taxes, and risk.” In the digital world, risks are a constant that we must take into account, whether as citizens, product or service providers, or as experts in the field of risk management. Let’s see how proper risk management can provide certainty and security in the digital space.

What does risk mean, and why does it deserve attention?

The digital world changes rapidly, every day. The concept of risk, however, is relatively static: every system, every program, every person using technology creates an “opening,” a vulnerability. These openings are not dangerous in themselves, but they are vulnerable to threats that can exploit them. Consider, for example, a flaw in a computer system at a nuclear power plant, a flawed process for accessing sensitive data, a bad setup of a network switch. The alarm bells are ringing.

The risk is there before you do anything, it is “inherent.” It is there by default, without any protective measures being taken. When you ride a bike, the very act of riding is a risk. In the digital world, the inherent risk arises from things like human carelessness, the complexity of systems, or the value of data shared with others. Risk itself is a certainty, a constant of life. That does not mean we ignore it.

So far, so good. The fact that I cross the threshold of my door every morning is a dangerous situation, theoretically. What is the point of action if the risk is there anyway? The next stage is to identify which risks require attention and action. This requires cold observation and logical thinking. Some risks are more significant than others, so they must go through the risk management "filter".

Calculating Risk

In its simplest form, risk (numerical or not) is simply a function of probability and impact. A given risk has (negative) consequences (impact) with some frequency (probability). Being able to quantify the variables of probability and impact in a quantitative way (using precise and detailed numbers, usually monetary for impact and annual occurrence units for probability) or qualitative way (using more arbitrary calculations, usually using a scale from 1 to 5) brings us closer to calculating risk.

In the example of cycling, a risk is my sudden encounter with a brown bear (and the unpleasant consequences that might follow). The likelihood of this happening varies depending on the situation - if I am riding my bike in an area of Korydallos, the chances of encountering a bear are close to zero. If I have gone cycling in the Pindos mountains, the situation changes dramatically. The impact of the encounter with the bear also changes. If I carry bear spray or have watched many videos on how to deal with a brown bear (the author of this article has watched quite a few such videos), I may escape with bruises and scratches (or a broken bicycle). If I lack knowledge and tools, things become more difficult.

Here the importance of protective measures and the transition from inherent to residual risk also becomes apparent. Through the protective measures at my disposal (spray), I can lower the impact of the encounter from certain death to admission to the hospital for stitches. Residual risk is the risk that remains after we take protective measures against it! Protective measures are an integral part of risk management.

Risk Management Methods

There are four appropriate ways to deal with a risk, once it has been perceived (and quantified or quantified). These options are: acceptance, transfer, reduction or elimination.

Acceptance means that you understand the risk and hold on to it, not passively or ignorantly, but rationally. Some risks are so small that it costs more to deal with them than to accept them. If I ride my bike downtown, I accept the infinitesimal chance (0.00001%) that a bear will attack me, and I enjoy my ride.

Transfer is the assignment of risk to someone else (usually through insurance). The risk does not disappear, it simply changes hands. The responsibility remains with the person subject to the risk, but there is coverage in case of damage due to the risk. In the bear scenario, I hope my insurance covers such attacks, or at least my family receives a lump sum (through my life insurance) in case the spray doesn’t help.

Speaking of spray, this is a risk reduction method! Reduction means that you limit the likelihood or impact, and it is the most common method of dealing with risks. This includes any form of preventive protection. Every protective measure I take aims to reduce the risk. If I’m out cycling in the Pindus Mountains with 10 other friends, the chances of the bear attacking me instead of one of them are drastically reduced!

Elimination is the most absolute option, as you move away from the risk and its source. Elimination is the final cleanup: the recognition that something is beyond “patching.” Are there many hungry bears on the mountain I’m planning to visit? I choose the sea instead of the mountain and I have peace of mind!

While the above ways of dealing with risk are all tried and tested, there is one reaction that is not legitimate - risk ignorance. Knowing the risk and consciously choosing to ignore it will inevitably lead to negative results!

Dealing with risk in the digital world

Risks similar to a random bear encounter exist in the digital and online space, only instead of hungry four-legged friends, we encounter hackers, abused platforms, the use of artificial intelligence that violates human rights and defective hardware. And with the same logic as our trip to the forest, these risks require special treatment, taking into account the following basic principles:

- Risk management is not a single event, but a cycle. You identify, assess, act, and regularly review. The digital world is constantly changing, which means that the risk landscape is also changing. What was secure in the morning may be vulnerable by evening. Technology waits for no one - and the associated risks must be constantly recorded and addressed.

- A holistic approach to risk is crucial. One gap is enough to cause radical damage to citizens, users, and businesses. Partial protection creates a false sense of security. In the digital space, the weak point is often not the most obvious. It can be the forgotten file, the inadequate password, the external partner using a fragile application. Therefore, a holistic view is required.

- It is also necessary to understand that risk is not only technical, but also organizational, human, or procedural. In practice, most damage results from mistakes, omissions, or misunderstandings. Technology simply exacerbates the consequences. Therefore, it is necessary to address it from many different angles.

- Awareness and education on information and data protection issues are key to reducing risks. No matter how organized you are, there will always be someone who will write their passwords in plain sight, open the wrong file, or accidentally press “delete.” The human element cannot be eliminated.

- Prevention is always cheaper than recovery. For the average user, risk management may seem like a chore, but the reality is that the world of technology has grown so much that ignorance of risk is costly. Just as no one waits to install an alarm system after a break-in, risk management works best before bad things happen.

The essence of risk management is targeted clarity: although absolute security is not possible, we strive for stability while trying to avoid major mistakes. When you understand this, risk management ceases to be a burden. It becomes an organized and coordinated effort, and then a habit. A kind of mental exercise where you ask: “What could go wrong? How much do I care? What do I do about it?” Not as an exercise in fear, but as an exercise in pure reasoning and protection. Risk will always be there. Managing it is a conscious choice, and awareness is a tool.

*Ioannis Ntokos is an IT risk management, information security and third party risk management specialist, with expertise in data protection. He specializes in ISO27001, NIST, NIS2 and the General Data Protection Regulation (GDPR). In his spare time, he offers career advice on IT governance, risk and compliance through his YouTube channel

European Digital Identity Wallet (EUDI Wallet): The Uncomfortable Truth Behind the Innovation

By Giannis Konstantinidis*

Note: This is the English translation of the original article which was written in Greek.

A critical look at the EU’s new “wallet” and the hidden risks it poses to personal data protection and privacy in the digital age.

What are digital identities?

Digital identities are the set of information (e.g. name, professional status, address, telephone number, password) that characterise us when we use digital services on the Internet. In simple words, they are our “digital selves” when we connect to social networking platforms, e-government services, e-banking systems, etc. In practice, digital identities contain personal data which in many cases are also sensitive (e.g. in the case of e-health services). Therefore, digital identities must enable fast and trouble-free access to digital services and meanwhile be accompanied by strict safeguards regarding information security and data protection.

How have digital identities evolved?

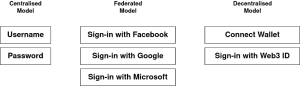

There are three main models for organising digital identities (Figure 1). In the centralised model, an organisation maintains a central database with the digital identities of all users. A key disadvantage of the centralised model is that users must maintain a separate account for each digital service they use. In contrast, in the federated model, organisations cooperate with each other and exchange digital identity information using a common protocol. For example, if a user has an account with a central provider (e.g. Facebook, Google, Microsoft or gov.gr), then they can log-in to another compatible digital service with the same information. Therefore, the federated model is quite easy to use, although the overall management of digital identities and their attributes is carried out by a few providers (who might be aware of the users’ activities).

Figure 1: In the centralised model, users have separate accounts (and passwords) for each service they use. In contrast, in the federated model, there are a few providers that act as single “gateways” to services. Finally, in the decentralised model, users use their digital wallets and ideally choose the specific information they want to share with service providers. (Creator Giannis Konstantinidis)

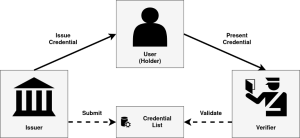

Therefore, in both the centralised and the federated model, a major concern is the concentration of a large amount of data in central locations that are considered attractive to malicious attackers (see massive data breaches). As an “antidote”, the decentralised model has been proposed, which is often associated with the concept of “self-sovereign identity” (SSI). In this model, the user controls all the identity elements that are to be used by the services. Instead of relying upon a few providers, each user holds a set of “credentials”, which have been issued by trusted entities, and maintains them in an application called a digital wallet or digital identity wallet. When the user needs to prove something (e.g. their age), the digital credential is directly presented (signed beforehand by a trusted organisation that serves as the issuer). Ideally, the presentation of that digital credential reflects the minimum amount of the required data and does not reveal the entire personal data of the user.

Figure 2: In the decentralised model, the issuer generates and delivers a credential to the user (holder) who stores it in the digital wallet. The user then presents the credential to a verifier, i.e. an organisation that requests confirmation of the user’s identity and/or status. The validity of the credential is verified based on the information found in a registry.(Creator Giannis Konstantinidis)

What is happening in the EU with digital identities?

With the revision of the eIDAS Regulation (2024/1183), the European Commission has established that each EU Member-State must offer its citizens a digital identity wallet. This will be an application for mobile devices in which each user will be able to store documents in digital form (e.g. ID cards, driving licenses, educational qualifications, social security documents and other travel documents). The user's interaction with the respective services will be done through the wallet, i.e. the user will select the credentials they wish to share. As mentioned earlier, the entire documents are not sent by each user, but a selected presentation of certain data in combination with the appropriate digital evidence that cryptographically proves the validity of those documents. Admittedly, the original vision of a “sovereign identity” seems to be significantly limited in the current design of the EUDI Wallet. In particular, the draft architecture (i.e. Architecture and Reference Framework - ARF) foresees the use of a traditional Public Key Infrastructure (PKI). Simply put, instead of leveraging a fully decentralised system, the European Commission chooses to leverage existing infrastructures that collect digital identity data. As such, it is more of a cross-border federated model in which users are responsible for managing and sharing their credentials on their own, rather than a fully decentralised model.

Is the protection of privacy and personal data enhanced or undermined?

Based on current developments, several risks related to data protection and privacy arise. First, the wallet can generate unique identifiers for each user (although theoretically this is necessary to identify the user when accessing cross-border services in the EU) and several experts express fear that these identifiers will allow for the continuous monitoring and correlation of all user activities.

In particular, according to the position of a group of distinguished academics (specialists in cryptography), the proposed architecture does not include sufficient technical measures to limit the “observability” and prevent the “linkability” of user activities. This means that even if user activities are carried out through the use of pseudonyms, there is no special care to prevent service providers from collecting usage patterns and correlating them with each other. So, in practice, this gap allows the tracing of user activities. The latest version of the architecture (ARF 2.3.0) recognises these risks, however, the integration of appropriate mechanisms remains at the level of discussion and has not yet been implemented (due to complexity and certain technical limitations). The European Telecommunications Standards Institute (ETSI) recognises the importance of technical measures, such as zero-knowledge proofs (ZKPs), but it is shown that (for the time being) the complete elimination of tracking is not feasible due to the technical complexity and lack of interoperability.

Regarding the overall “flexibility” and accountability of the ecosystem, there are also several negative comments. For example, if a service decides to request more data than necessary, there is no mechanism for prevention or even control. At the same time, it is considered that a huge share of responsibility will be shifted to users, because they will be constantly asked to approve the credentials that will be shared with service providers. In fact, if something goes wrong (e.g. in the event of theft of the user’s device or electronic fraud), there are no sufficient protection measures and therefore the user bears a large share of the responsibilities. In fact, there is no provision (so far) for any kind of recovery or restoration process.

Finally, an additional concern relates to the expansion of the wallet's functionalities, as it is going to gradually collect all kinds of electronic documents and certificates (e.g. even travel credentials and electronic payment details). Thus, the risk of a "surveillance dossier" emerges, where a malicious analyst or attacker could discover an extensive set of information about a person through a single medium.

Towards a cautious acceptance or questioning of the framework?

Although the EUDI Wallet is an important step in the development of modern digital services on the Internet, it comes with several challenges. If citizens are to trust such a technological solution, they must do so with full awareness of the advantages as well as the potential risks involved. At the same time, experts must further develop and document the mechanisms that contribute to security and privacy, otherwise we risk moving from a “wallet that empowers users to protect their data” to a “wallet that exposes data arbitrarily”. Finally, the contribution of experts and civil society organisations is extremely important, as gaps and possible omissions can be identified and corrected before the final implementation.

*Giannis Konstantinidis (CISSP, CIPM, CIPP/E, ISO/IEC 27001 & 27701 Lead Implementer) is a cybersecurity consultant and member of Homo Digitalis since 2019.

Digital Services Act: Striking a Balance Between Online Safety and Free Expression

By Anastasios Arampatzis

The European Union’s Digital Services Act (DSA) stands as a landmark effort to bring greater order to the often chaotic realm of the internet. This sweeping legislation aims to establish clear rules and responsibilities for online platforms, addressing a range of concerns from consumer protection to combatting harmful content. Yet, within the DSA’s well-intentioned provisions lies a fundamental tension that continues to challenge democracies worldwide: how do we ensure a safer, more civil online environment without infringing on the essential liberties of free expression?

This blog delves into the complexities surrounding the DSA’s provisions on chat and content control. We’ll explore how the fight against online harms, including the spread of misinformation and deepfakes, must be carefully weighed against the dangers of censorship and the chilling of legitimate speech. It’s a balancing act with far-reaching consequences for the future of our digital society.

Online Harms and the DSA’s Response

The digital realm, for all its promise of connection and knowledge, has become a breeding ground for a wide range of online harms. Misinformation and disinformation campaigns erode trust and sow division, while hate speech fuels discrimination and violence against marginalized groups. Cyberbullying shatters lives, particularly those of vulnerable young people. The DSA acknowledges these dangers and seeks to address them head-on.

The DSA places new obligations on online platforms, particularly Very Large Online Platforms (VLOPs) with a significant reach. These requirements include:

- Increased transparency: Platforms must explain how their algorithms work and the criteria they use for recommending and moderating content.

- Accountability: Companies will face potential fines and sanctions for failing to properly tackle illegal and harmful content.

- Content moderation: Platforms must outline clear policies for content removal and implement effective, user-friendly systems for reporting problematic content.

The goal of these DSA provisions is to create a more responsible digital ecosystem where harmful content is less likely to flourish and where users have greater tools to protect themselves.

The Censorship Concern

While the DSA’s intentions are admirable, its measures to combat online harms raise legitimate concerns about censorship and the potential suppression of free speech. History is riddled with instances where the fight against harmful content has served as a pretext to silence dissenting voices, critique those in power, or suppress marginalized groups.

Civil society organizations have stressed the need for DSA to include clear safeguards to prevent its well-meaning provisions from becoming tools of censorship. It’s essential to have precise definitions of “illegal” or “harmful” content – those that directly incite violence or break existing laws. Overly broad definitions risk encompassing satire, political dissent, and artistic expression, which are all protected forms of speech.

Suppressing these forms of speech under the guise of safety can have a chilling effect, discouraging creativity, innovation, and the open exchange of ideas vital to a healthy democracy. It’s important to remember that what offends one person might be deeply important to another. The DSA must tread carefully to avoid empowering governments or platforms to unilaterally decide what constitutes acceptable discourse.

Deepfakes and the Fight Against Misinformation

Deepfakes, synthetic media manipulated to misrepresent reality, pose a particularly insidious threat to the integrity of information. Their ability to make it appear as if someone said or did something they never did has the potential to ruin reputations, undermine trust in institutions, and even destabilize political processes.

The DSA rightfully recognizes the danger of deepfakes and places an obligation on platforms to make efforts to combat their harmful use. However, this is a complex area where the line between harmful manipulation and legitimate uses can become blurred. Deepfake technology can also be harnessed for satire, parody, or artistic purposes.

The challenge for the DSA lies in identifying deepfakes created with malicious intent while protecting those generated for legitimate forms of expression. Platforms will likely need to develop a combination of technological detection tools and human review mechanisms to make these distinctions effectively.

The Responsibility of Tech Giants

When it comes to spreading harmful content and the potential for online censorship, a large portion of the responsibility falls squarely on the shoulders of major online platforms. These tech giants play a central role in shaping the content we see and how we interact online.

The DSA directly addresses this immense power by imposing stricter requirements on the largest platforms, those deemed Very Large Online Platforms. These requirements are designed to promote greater accountability and push these platforms to take a more active role in curbing harmful content.

A key element of the DSA is the push for transparency. Platforms will be required to provide detailed explanations of their content moderation practices, including the algorithms used to filter and recommend content. This increased visibility aims to prevent arbitrary or biased decision-making and offers users greater insight into the mechanisms governing their online experiences.

Protecting Free Speech – Where do We Draw the Line?

The protection of free speech is a bedrock principle of any democratic society. It allows for the robust exchange of ideas, challenges to authority, and provides a voice for those on the margins. Yet, as the digital world has evolved, the boundaries of free speech have become increasingly contested.

The DSA represents an honest attempt to navigate this complex terrain, but it’s vital to recognize that there are no easy answers. The line between harmful content and protected forms of expression is often difficult to discern. The DSA’s implementation must include strong safeguards informed by fundamental human rights principles to ensure space for diverse opinions and critique.

In this effort, we should prioritize empowering users. Investing in media literacy education and promoting tools for critical thinking are essential in helping individuals become more discerning consumers of online information.

Conclusion

The Digital Services Act signals an important turning point in regulating the online world. The struggle to balance online safety and freedom of expression is far from over. The DSA provides a strong foundation but needs to be seen as a step in an ongoing process, not a final solution. To ensure a truly open, democratic, and safe internet, we need continuing vigilance, robust debate, and the active participation of both individuals and civil society.

The Looming Disinformation Crisis: How AI is Weaponizing Misinformation in the Age of Elections

By Anastasios Arampatzis

Misinformation is as old as politics itself. From forged pamphlets to biased newspapers, those seeking power have always manipulated information. Today, a technological revolution threatens to take disinformation to unprecedented levels. Generative AI tools, capable of producing deceptive text, images, and videos, give those who seek to mislead an unprecedented arsenal. In 2024, as a record number of nations hold elections, including the EU Parliamentary elections in June, the very foundations of our democracies tremble as deepfakes and tailored propaganda threaten to drown out truth.

Misinformation in the Digital Age

In the era of endless scrolling and instant updates, misinformation spreads like wildfire on social media. It’s not just about intentionally fabricated lies; it’s the half-truths, rumors, and misleading content that gain momentum, shaping our perceptions and sometimes leading to real-world consequences.

Think of misinformation as a distorted funhouse mirror. Misinformation is false or misleading information presented as fact, regardless of whether there’s an intent to deceive. It can be a catchy meme with a dubious source, a misquoted scientific finding, or a cleverly edited video that feeds a specific narrative. Unlike disinformation, which is a deliberate spread of falsehoods, misinformation can creep into our news feeds even when shared with good intentions.

How the Algorithms Push the Problem

Social media platforms are driven by algorithms designed to keep us engaged. They prioritize content that triggers strong emotions – outrage, fear, or click-bait-worthy sensationalism. Unfortunately, the truth is often less exciting than emotionally charged misinformation. These algorithms don’t discriminate based on accuracy; they fuel virality. With every thoughtless share or angry comment, we further amplify misleading content.

The Psychology of Persuasion

It’s easy to blame technology, but the truth is we humans are wired in ways that make us susceptible to misinformation. Here’s why:

- Confirmation Bias: We tend to favor information that confirms what we already believe, even if it’s flimsy. If something aligns with our worldview, we’re less likely to question its validity.

- Lack of Critical Thinking: In a fast-paced digital world, many of us lack the time or skills to fact-check every claim we encounter. Pausing to assess the credibility of a source or the logic of an argument is not always our default setting.

How Generative AI Changes the Game

Generative AI models learn from massive datasets, enabling them to produce content indistinguishable from human-created work. Here’s how this technology complicates the misinformation landscape:

- Deepfakes: AI-generated videos can convincingly place people in situations they never were or make them say things they never did. This makes it easier to manufacture compromising or inflammatory “evidence” to manipulate public opinion.

- Synthetic Text: AI tools can churn out large amounts of misleading text, like fake news articles or social media posts designed to sound authentic. This can overwhelm fact-checking efforts.

- Cheap and Easy Misinformation: The barrier to creating convincing misinformation keeps getting lower. Bad actors don’t need sophisticated technical skills; simple AI tools can amplify their efforts.

The Dangers of Misinformation

The impact of misinformation goes well beyond hurt feelings. It can:

- Pollute Public Discourse: Misinformation hinders informed debate. It leads to misunderstandings about important issues and makes finding consensus difficult.

- Erode Trust: When we can’t agree on basic facts, trust in institutions, science, and even the democratic process breaks down.

- Targeted Manipulation: AI tools can allow for highly personalized misinformation campaigns that prey on specific vulnerabilities or biases of individuals and groups.

- Influence Decisions: Misinformation can influence personal decisions, including voting for less qualified candidates or promoting radical agendas.

What Can Be Done?

There is no single, easy answer for combating the spread of misinformation. Disinformation thrives in a complicated web of human psychology, technological loopholes, and political agendas. However, recognizing these challenges is the first step toward building effective solutions. Here are some crucial areas to focus on:

- Boosting Tech Literacy: In a digital world, the ability to distinguish reliable sources from questionable ones is paramount. Educational campaigns, workshops, and accessible online resources should aim to teach the public how to spot red flags for fake news: sensational headlines, unverified sources, poorly constructed websites, or emotionally charged language.

- Investing in Fact-Checking: Supporting independent fact-checking organizations is key. These act as vital watchdogs, scrutinizing news, politicians’ claims, and viral content. Media outlets should consider prominently labeling content that has been verified or clearly marking potentially misleading information.

- Balancing Responsibility & Freedom: Social media companies and search engines bear significant responsibility for curbing the flow of misinformation. The EU’s Digital Services Act (DSA) underscores this responsibility, placing requirements on platforms to tackle harmful content. However, this is a delicate area, as heavy-handed censorship can undermine free speech. Strategies such as demoting unreliable sources, partnering with fact-checkers, and providing context about suspicious content can help, but finding the right balance is an ongoing struggle, even in the context of evolving regulations like the DSA.

- The Importance of Personal Accountability: Even with institutional changes, individuals play a vital role. It’s essential to be skeptical, ask questions about where information originates, and be mindful of the emotional reactions a piece of content stirs up. Before sharing anything, verify it with a reliable source. Pausing and thinking critically can break the cycle of disinformation.

The fight against misinformation is a marathon, not a sprint. As technology evolves, so too must our strategies. We must remain vigilant to protect free speech while safeguarding the truth.

From Clean Monday to Cyber Cleanliness: Bridging Traditions with Modern Cyber Hygiene Practices

By Anastasios Arampatzis and Ioannis Vassilakis

In the heart of Greek tradition lies Clean Monday, which marks the beginning of Lent leading to Easter and symbolizes a fresh start, encouraging cleanliness, renewal, and preparation for the season ahead. This day, celebrated with kite flying, outdoor activities, and cleansing the soul, carries profound significance in purifying one’s life in all aspects.

Just as Clean Monday invites us to declutter our homes and minds, there exists a parallel in the digital realm that often goes overlooked: cyber hygiene. Maintaining a clean and secure online presence is imperative in an era where our lives are intertwined with the digital world more than ever.

Understanding Cyber Hygiene

Cyber hygiene refers to the practices and steps that individuals take to maintain system health and improve online security. These practices are akin to personal hygiene routines; just as regular handwashing can prevent the spread of illness, everyday cyber hygiene practices can protect against cyber threats such as malware, phishing, and identity theft.

The importance of cyber hygiene cannot be overstated. In today’s interconnected world, a single vulnerability can lead to a cascade of negative consequences, affecting not just the individual but also organizations and even national security. The consequences of neglecting cyber hygiene can be severe:

- Data breaches.

- Identity theft.

- Loss of privacy.

As we celebrate Clean Monday and its cleansing rituals, we should also adopt cyber hygiene practices to prepare for a secure and private digital future free from cyber threats.

Clean Desk and Desktop Policies – The Foundation of Cyber Cleanliness

Just as Clean Monday encourages us to purge our homes of unnecessary clutter, a clean desk and desktop policy is the cornerstone of maintaining a secure and efficient workspace, both physically and digitally. These policies are not just about keeping a tidy desk; they’re about safeguarding sensitive information from prying eyes and ensuring that critical data isn’t lost amidst digital clutter.

- Clean Desk Policy ensures that sensitive documents, notes, and removable storage devices are secured when not in use or when an employee leaves their desk. It’s about minimizing the risk of sensitive information falling into the wrong hands, intentionally or accidentally.

- Clean Desktop Policy focuses on the digital landscape, advocating for a well-organized computer desktop. This means regularly archiving or deleting unused files, managing icons, and ensuring that sensitive information is not exposed through screen savers or unattended open documents.

The benefits of these policies are profound:

- Reduced risk of information theft.

- Increased efficiency and enhanced productivity.

- Enhanced professional image and competence.

The following simple tips can help you maintain cleanliness:

- Implement a Routine: Just as the rituals of Clean Monday are ingrained in our culture, incorporate regular clean-up routines for physical and digital workspaces.

- Secure Sensitive Information: Use locked cabinets for physical documents and password-protected folders for digital files.

- Adopt Minimalism: Keep only what you need on your desk and desktop. Archive or delete old files and dispose of unnecessary paperwork.

Navigating the Digital Landscape: Ad Blockers and Cookie Banners

Using ad blockers and understanding cookie banners are essential for maintaining a clean and secure online browsing experience. As we carefully select what to keep in our homes, we must also choose what to allow into our digital spaces.

- Ad Blockers prevent advertisements from being displayed on websites. While ads can be a source of information and revenue for site owners, they can also be intrusive, slow down web browsing, and sometimes serve as a vector for malware.

- Cookie Banners inform users about a website’s use of cookies. Understanding and managing these consents can significantly enhance your online privacy and security.

To achieve a cleaner browsing experience:

- Choose reputable ad-blocking software that balances effectiveness with respect for websites’ revenue models. Some ad blockers allow non-intrusive ads to support websites while blocking harmful content.

- Take the time to read and understand what you consent to when you agree to a website’s cookie policy. Opt for settings that minimize tracking and personal data collection where possible.

- Regularly review and clean up your browser’s permissions and stored cookies to ensure your online environment remains clutter-free and secure.

Cultivating Caution in Digital Interactions

In the same way that Clean Monday prompts us to approach our physical and spiritual activities with mindfulness and care, we must also navigate our digital interactions with caution and deliberateness. While brimming with information and connectivity, the digital world also harbors risks such as phishing scams, malware, and data breaches.

- Verify Before You Click: Ensure the authenticity of websites before entering sensitive information, and be skeptical of emails or messages from unknown sources.

- Use BCC in Emails When Appropriate: Sending emails, especially to multiple recipients, should be handled carefully to protect everyone’s privacy. Using Blind Carbon Copy (BCC) ensures that recipients’ email addresses are not exposed to everyone on the list.

- Recognize and Avoid Phishing Attempts: Phishing emails are the digital equivalent of wolves in sheep’s clothing, often masquerading as legitimate requests. Learning to recognize these attempts can protect you from giving away sensitive information to the wrong hands.

- Embrace skepticism in your online interactions: Ask yourself whether information shared is necessary, whether links are safe to click, and whether personal data needs to be disclosed.

Implementing a Personal Cyber Cleanliness Routine

Drawing inspiration from the rituals of Clean Monday, establishing a personal routine for cyber cleanliness is beneficial and essential for maintaining digital well-being. The following steps can help show a cleaner digital life.

- Enable Multi-Factor Authentication (MFA) wherever it is possible to keep unauthorized users out of personal accounts.

- Periodically review privacy settings on social media and other online platforms to ensure you only share what you intend to.

- Unsubscribe from unused services, delete old emails and remove unnecessary files to reduce the cognitive load and make it easier to focus on what’s important.

- Just as Clean Monday marks a time for physical and spiritual cleansing, set specific times throughout the year for digital clean-ups.

- Keep abreast of the latest in cybersecurity to ensure your practices are up-to-date. Knowledge is power, particularly when it comes to protecting yourself online.

- Share your knowledge and habits with friends, family, and colleagues. Just as traditions like Clean Monday are passed down, so too can habits of cyber cleanliness.

Embracing a Future of Digital Cleanliness and Renewal

The principles of Clean Monday can also be applied to our digital lives. Maintaining a healthy, secure digital environment is a continuous commitment and requires regular maintenance. We take proactive steps toward securing our personal and professional data by implementing clean desk and desktop policies, navigating the digital landscape with caution, and cultivating a routine of personal cyber cleanliness. Let us embrace this opportunity for a digital clean-up and create a safer digital world for all.

Spyware: A New Threat to Privacy in Communication

*By Sofia Despoina Feizidou

The Athens Polytechnic uprising in November 1973 was the most massive anti-dictatorial protest and a precursor to the collapse of the military dictatorship regime imposed on the Greek people since April 21, 1963. Among other things, this regime had abolished fundamental rights.

One of the most critical fundamental rights is the right to the protection of correspondence, especially the confidentiality of communication. The right of an individual to share and exchange thoughts, ideas, feelings, news, and opinions within an intimate and confidential framework, with chosen individuals, without fear of private communication being monitored or any expression being revealed to third parties or used against them, is essential to democracy. Therefore, it is a fundamental individual right enshrined in international and European legislation, as well as in national Constitutions. The provision of Article 19 of the Greek Constitution dates back to 1975 (which may not be a coincidence).

However, the revelation of the surveillance of politicians or their relatives, actors, journalists, businessmen, and others one year ago shows that the protection of communication privacy remains vulnerable, especially in the modern digital age.

Spyware: A New Asset in the Arsenal of Intelligence Services and Companies

Spyware is a type of malware designed to secretly monitor a person's activities on their electronic devices, such as computers or mobile phones, without the end user's knowledge or consent. Spyware is typically installed on devices by opening an email or a file attachment. Once installed, it is difficult to detect, and even if detected, proving responsibility for the invasion is challenging. Spyware provides full and retroactive access to the user’s device, monitoring internet activity and gathering sensitive information and personal data, including files, messages, passwords, or credit card numbers. Additionally, it can capture screenshots or monitor audio and video by activating the device's microphone or camera.

Some of the most well-known spyware designed to invade and monitor mobile devices remotely include:

- Predator: This spyware is installed on the device when a user receives a message containing a link that appears normal and includes a catchy description to mislead the user into clicking on the link. Once clicked, the spyware is automatically installed, granting full access to the device, messages, files, as well as its camera and microphone.

- Pegasus: Similar to Predator, Pegasus aims to convince the user to click on a link, which then installs the spyware on the device. However, Pegasus can also be installed on a device without requiring any action from the user, such as a missed call on WhatsApp. Immediately after installation, it executes its operator's commands and gathers a significant amount of personal data, including files, passwords, text messages, call records, or the user’s location, leaving no trace of its existence on the device.

In June 2023, the Chairman of the European Parliament’s Committee of Inquiry investigating the use of Pegasus and similar surveillance spyware stated: "Spyware can be an effective tool in fighting crime, but when used wrongly by governments, it poses a significant risk to the rule of law and fundamental rights." Indeed, the technological capabilities of spyware provide unauthorized access to personal data and the monitoring of people's activities, leading to violations of the right to communication confidentiality, the right to the protection of personal data, and the right to privacy in general.

According to the Committee's findings, the abuse of surveillance spyware is widespread in the European Union. In addition to Greece, the use of such software has been found in Poland, Hungary, Spain, and Cyprus, which is deeply concerning. The need to establish a regulatory framework to prevent such abuse is now in the spotlight, not only at the national level but primarily at the EU level.

What Do We Need?

- Clear Rules to Prevent Abuse: European rules should clearly define how law enforcement authorities can use spyware. The use of spyware by law enforcement should only be authorized in exceptional cases, for a predefined purpose, and for a limited period of time. A common legal definition of the concept of 'national security reasons' should be established. The obligation to notify targeted individuals and non-targeted individuals whose data were accessed during someone else’s surveillance, as well as procedures for supervision and independent control following any incident of illegal use of such software, should also be enshrined.

- Compliance of National Legislation with European Court of Human Rights Case Law: The Court grants national authorities wide discretion in weighing the right to privacy against national security interests. However, it has developed and interpreted the criteria introduced by the European Convention of Human Rights, which must be met for a restriction on the right to confidential, free communication to be considered legitimate. This has been established in numerous judgments since 1978.

- Establishment of the "European Union Technology Laboratory": This independent research institute would be responsible for investigating surveillance methods and providing technological support, such as device screening and forensic research.

- Foreign Policy Dimension: Members of the European Parliament (MEPs) have called for a thorough review of spyware export licenses and more effective enforcement of the EU’s export control rules. The European Union should also cooperate with the United States in developing a common strategy on spyware, as well as with non-EU countries to ensure that aid provided is not used for the purchase and use of spyware.

Conclusion

In conclusion, as we reflect upon the lessons of history and the enduring struggle for democracy and fundamental rights, Benjamin Franklin's timeless wisdom resonates with profound significance: "They who can give up essential liberty to obtain a little temporary safety, deserve neither liberty nor safety." The recent revelations of spyware abuse have starkly illustrated the delicate balance between security and individual freedoms. While spyware may be wielded as a tool in the fight against crime, its potential for misuse poses a grave threat to the rule of law and the very principles upon which our democratic societies are built.

*Sofia-Despina Feizidou is a lawyer, and graduate of the Athens Law School, holding a Master's degree with specialization in "Law & Information and Communication Technologies" from the Department of Digital Systems of the University of Piraeus. Her thesis was on the comparative review of the case law of the European Courts (ECtHR and CJEU) on mass surveillance.

Drones & Artificial Intelligence at Greece’s high-tech borders

By Alexandra Karaiskou

In recent years, Greece has been investing significant funds and effort to modernize its border and migration management policy as part of the digitalization of its public sector. This modernization comes in a techno-solutionist shape and form which entails, as the term reveals, an enormous trust in technological solutions for long-standing and complex problems. In the field of border and immigration control, this includes the development, testing and deployment of advanced surveillance systems at the Greek borders, its refugee camps, and beyond to monitor unauthorized mobility, detect threats, and alert authorities for rapid intervention. While these technologies can bring noteworthy benefits, such as more coordinated and rapid responses in emergency situations that could prove critical for saving someone’s life, they also come with significant human rights risks. They can be also used to conduct more frequent and ‘effective’ pushbacks further away from the borders in more invisible and indetectable ways. Significant risks also exist for the rights to privacy, data protection, non-discrimination, and due process, which are undoubtedly among the first to be adversely impacted by the rollout of such technologies.

Although this techno-solutionist trend is not solely a Greek but a global phenomenon, its concretization in the Greek geo-political sphere, a country which manages a considerable portion of Europe’s south-east external borders, is illustrative of the future of European and Member States’ digital border and migration management policies. Technology has “become the ‘servant mistress of politics’”, as Bonditti has eloquently put it, which raises serious concerns about how these systems will be used in practice once deployed, given the systemic pushback and other non-entrée practices documented in the Aegean and Mediterranean seas. And while these technologies are being tested and deployed in a regulatory vacuum, the stakes become even higher.

REACTION: Greece’s latest border surveillance R&D project

As a country traditionally faced with important migratory and refugee inflows due to its geographical location, Greece has gained interest in research and innovation (R&D) projects developing border surveillance technologies. In the past 6 years, it has participated in more than nine EU co-funded projects as a research partner and/or a testing ground, including in the famous ROBORDER, CERETAB and AIDERS projects. In brief, these projects aim at developing various technologies, such as drones and other autonomous robotic vehicles equipped with multimodal cameras; automated early warning systems and innovative information exchange platforms; as well as algorithms (software) that can analyse real-time data and convert it into actionable information for national authorities. Their overall objective is to improve situational awareness around the borders and states’ interception capabilities and preparedness for emergency responses.

Having followed these developments closely from the outset, it came as no surprise when we saw yet another border surveillance project uploaded on the Greek Migration Ministry’s website last fall. This time it is REACTION, a project building on the findings of all the above projects, and co-funded by Greece, Cyprus, and the EU Integrated Border Management Fund. It aims at developing a next generation platform for border surveillance which can provide situational awareness at remote frontier locations as an efficient tool for rapid response to critical situations. Responding to irregular immigration, smuggling, human trafficking and, overall, transnational organised crime is described as the main driver behind the development of REACTION. The system will consist of several components, such as drones that can be used in swarm or solo formations, computer vision (via deep learning techniques), object recognition, identification and characterisation of events, early warning systems, as well as big data analytics. In simpler terms, software trained on machine learning techniques will analyse instantly the vast amounts of data collected by the drones and other sensors connected to the system and produce an alert identifying the type of incident and its coordinates. Depending on its design features, it may also offer additional tools to the authorities, such as the possibility to follow an unidentified object or person, zoom in and potentially cross-reference their distinctive features (ex. a person’s facial image) with data stored in existing databases. It is worth noting that REACTION will be interoperable with the servers of the Greek law enforcement authorities, and Reception and Identification Centres (RICs, i.e. refugee camps, analysed below), as well as EUROSUR, the EU border surveillance system operated by the EU Border and Coast Guard Agency, thereby drawing a wealth of information from various national and European sources.

Such systems come with the hope of revolutionising border management by elevating authorities’ detection and intervention capabilities to the next level. To what end, whether to save lives or let die, remains to be seen. Unfortunately, the country’s recent track record in emergency responses at the border, as evidenced by the recent shipwreck off Pylos and numerous other pushback incidents, leaves little room for optimism. Moreover, the deployment of such a tool might also undermine the right to privacy and data protection, among others, to the extent that anyone approaching the border could become a potential target of surveillance, whose sensitive biometric data could be unknowingly processed. It may still be soon to tell how this system will be implemented in the future, but the widening of power asymmetries between state authorities and vulnerable individuals along with the current state of practice paint a very alarming picture.

Hyperion & Centaur: the new surveillance systems at Greece’s refugee camps

Another area where new surveillance technologies are being piloted is Greece’s refugee camps. The Greek Ministry of Migration currently develops seven projects for the digitalisation of the asylum procedure and the camps’ management. One of them, Hyperion, which is expected to be deployed soon, will be the new management system of all the RICs, closed centres, and shelters. It will register asylum seekers’ personal data, both biographic (ex. full name, date and country of birth, nationality, etc.) and biometric (fingerprints), and will be the primary tool for controlling their entry in and exit from the camp by scanning their asylum seeker’s card and a fingerprint. It will also store information about most services provided to them, such as food, clothing, etc., and their transfers from one camp to another. In the near future, asylum seekers’ moves will be closely and continuously scrutinized by this system, as if they were inmates in high security prisons, which leads to highly intrusive practices that are difficult to justify. Besides surveillance, another objective of Hyperion is to enforce strict discipline to the state’s power: if someone, for instance, leaves the camp and does not return within the authorised time period, they could lose their access to the camp and to the rest of the services provided to them, leaving them homeless and without the most basic living conditions. Noteworthily, Hyperion will also be interoperable with an asylum seeker’s digital case file at the Asylum Service, which means that any alert in the system about a breach of the camp’s rules may have negative implications on their asylum claim. In the example above, unjustified absence from the camp could lead to the rejection of their asylum application, exposing them to the risk of detention and deportation.

In addition, Centaur is the new high-tech security management system of the camps that will automatically detect security breaches and alert the authorities. It consists of drones, optical and thermal cameras, microphones, metal detectors, and advanced motion detectors based on AI-powered behavioural analytics that monitor the internal and surrounding area of the camp. It is connected to a centralised control room in the Ministry’s headquarters in Athens and produces red flags whenever a security threat is detected, such as fights, unauthorized objects, fires, etc. From there, Ministry employees can zoom in and assess the risk, and instruct personnel on the ground on where and how to intervene. It has already been piloted at several camps and works complementarily to Hyperion by surveilling whatever move has been left unmonitored. In practice, the only places where people can enjoy some privacy is the bathrooms and to a certain extent the insides of their rooms. In the words of the 25-year-old refugee living in Samos camp: “there’s not a lot of difference between this camp and a prison”.

Although these systems have been promoted by the Greek authorities as efficient tools to ensure asylum seekers’ safety, this certainly comes at a high cost for privacy and fundamental rights. Besides their right to privacy, which is obviously seriously restricted, their right to non-discrimination or due process could be adversely affected, if the use of these systems leads to biased decisions or summary rejections of asylum applications. Moreover, their right to data protection is also severely compromised. Importantly, no Data Protection Impact Assessment seems to have been conducted, although required by the GDPR; and no regulatory framework has been adopted yet to govern the use (and potential misuse) of these systems, and mitigate these and other human rights risks. While the Ministry’s radio silence on these issues echoes loudly, we look forward to the findings of the Greek DPA’s investigation and trust them to ensure the protection of all persons’ rights in the digital era, regardless of where they are coming from.

Four steps to compliance today

Although the deadlines are further down the line, affected organizations do not have to sit and wait. Time (and money) is precious when preparing to achieve compliance with the NIS2 and DORA requirements. Organizations must assess and identify actions they can take to prepare for the new rules.

The following recommendations are a good starting point:

Governance and risk management: Understand the new requirements and evaluate the current governance and risk management processes. Additionally, consider increasing funding for programs that help detect threats and incidents and strengthening enterprise-wide cybersecurity awareness training initiatives.

Incident reporting: Evaluate the maturity of incident management and reporting to understand current state capabilities and gauge awareness of the various cybersecurity incident reporting standards relevant to your industry. You should also check your ability to recognize near-miss situations.

Resilience testing: Recognize the talents needed to design and carry out resilience testing, including board member training sessions on the techniques used and their implications for repair.

The Challenge of Complying with New EU Legislative Security Requirements

Γράφουν οι Αναστάσιος Αραμπατζής και Λευτέρης Χελιουδάκης

Τα τελευταία χρόνια, ο αριθμός των πρωτοβουλιών ψηφιακής πολιτικής σε επίπεδο ΕΕ έχει διευρυνθεί. Έχουν ήδη υιοθετηθεί πολλές νομοθετικές προτάσεις που καλύπτουν τις τεχνολογίες πληροφοριών και επικοινωνιών (ΤΠΕ) και επηρεάζουν τα δικαιώματα και τις ελευθερίες των ανθρώπων στην ΕΕ, ενώ άλλες παραμένουν υπό διαπραγμάτευση. Οι περισσότερες από αυτές τις νομοθετικές πράξεις είναι καίριας σημασίας και αφορούν ένα ευρύ φάσμα πολύπλοκων θεμάτων, όπως η τεχνητή νοημοσύνη, η διακυβέρνηση δεδομένων, η προστασία της ιδιωτικής ζωής και η ελευθερία της έκφρασης στο διαδίκτυο, η πρόσβαση των αρχών επιβολής του νόμου σε ψηφιακά δεδομένα, η ηλεκτρονική υγεία και η κυβερνοασφάλεια.

Οι φορείς της κοινωνίας των πολιτών χρειάζονται συχνά βοήθεια για να παρακολουθήσουν αυτές τις πολιτικές πρωτοβουλίες της ΕΕ, ενώ οι επιχειρήσεις αντιμετωπίζουν σοβαρές προκλήσεις στην κατανόηση της πολύπλοκης νομικής γλώσσας των νομοθετικών απαιτήσεων. Το παρόν άρθρο αποσκοπεί στην ευαισθητοποίηση σχετικά με δύο πρόσφατα εκδοθείσες νομοθεσίες της ΕΕ για την ασφάλεια στον κυβερνοχώρο, και συγκεκριμένα την Πράξη για την Ψηφιακή Επιχειρησιακή Ανθεκτικότητα (DORA) και την αναθεωρημένη έκδοση της Οδηγίας για την Ασφάλεια Δικτύων και Πληροφοριών (NIS2).

NIS2

Η NIS2 αποτελεί την αναγκαία απάντηση στο διευρυμένο τοπίο που απειλεί τις κρίσιμες ευρωπαϊκές υποδομές.

Η αρχική έκδοση της Oδηγίας εισήγαγε διάφορες υποχρεώσεις για την εθνική εποπτεία των φορέων εκμετάλλευσης βασικών υπηρεσιών (ΦΕΒΥ) και των παρόχων ψηφιακών υπηρεσιών (ΠΨΥ). Για παράδειγμα, τα κράτη μέλη της ΕΕ πρέπει να εποπτεύουν το επίπεδο κυβερνοασφάλειας των φορέων εκμετάλλευσης σε κρίσιμους τομείς, όπως η ενέργεια, οι μεταφορές, το νερό, η υγειονομική περίθαλψη, οι ψηφιακές υποδομές, οι τράπεζες και οι υποδομές της χρηματοπιστωτικής αγοράς. Επιπλέον, τα κράτη μέλη πρέπει να εποπτεύουν τους παρόχους κρίσιμων ψηφιακών υπηρεσιών, συμπεριλαμβανομένων των διαδικτυακών αγορών, των υπηρεσιών υπολογιστικού νέφους και των μηχανών αναζήτησης.

Για το λόγο αυτό, τα κράτη μέλη της ΕΕ έπρεπε να δημιουργήσουν αρμόδιες εθνικές αρχές που θα είναι επιφορτισμένες με αυτά τα εποπτικά καθήκοντα. Επιπλέον, η NIS εισήγαγε διαύλους για τη διασυνοριακή συνεργασία και ανταλλαγή πληροφοριών μεταξύ των κρατών μελών της ΕΕ.

Ωστόσο, η ψηφιοποίηση των υπηρεσιών και το αυξημένο επίπεδο των κυβερνοεπιθέσεων σε ολόκληρη την ΕΕ οδήγησαν την Ευρωπαϊκή Επιτροπή το 2020 να προτείνει μια αναθεωρημένη έκδοση της NIS, δηλαδή τη NIS2. Η νέα οδηγία τέθηκε σε ισχύ στις 16 Ιανουαρίου 2023 και τα κράτη μέλη έχουν πλέον 21 μήνες, έως τις 17 Οκτωβρίου 2024, για να μεταφέρουν τα μέτρα της στο εθνικό τους δίκαιο.

Οι νέες διατάξεις έχουν διευρύνει το πεδίο εφαρμογής της NIS με σκοπό την ενίσχυση των απαιτήσεων ασφαλείας που επιβάλλονται στα κράτη μέλη της ΕΕ, τον εξορθολογισμό των υποχρεώσεων αναφοράς περιστατικών ασφαλείας και τη θέσπιση ισχυρότερων εποπτικών μέτρων και αυστηρότερων απαιτήσεων τήρησης της νομοθεσίας, όπως για παράδειγμα ένα εναρμονισμένο καθεστώς κυρώσεων για όλα τα κράτη μέλη της ΕΕ.

Η NIS2 εισάγει τα ακόλουθα στοιχεία:

- Διευρυμένη εφαρμογή: Η NIS2 αυξάνει τον αριθμό των τομέων που καλύπτουν οι διατάξεις της, συμπεριλαμβανομένων των ταχυδρομικών υπηρεσιών, των κατασκευαστών αυτοκινήτων, των πλατφορμών των μέσων κοινωνικής δικτύωσης, της διαχείρισης αποβλήτων, της παραγωγής χημικών προϊόντων και αγροτικών προϊόντων διατροφής. Οι νέοι κανόνες ταξινομούν τις οντότητες σε “βασικές οντότητες” και “σημαντικές οντότητες” και ισχύουν για τους υπεργολάβους και τους παρόχους υπηρεσιών που δραστηριοποιούνται στους καλυπτόμενους τομείς.

- Αυξημένη ετοιμότητα για τις παγκόσμιες απειλές στον κυβερνοχώρο: Η NIS2 επιδιώκει να ενισχύσει τη συλλογική επίγνωση της κατάστασης μεταξύ των βασικών οντοτήτων για τον εντοπισμό και την κοινοποίηση σχετικών απειλών πριν αυτές επεκταθούν σε όλα τα κράτη μέλη. Για παράδειγμα, το δίκτυο EU-CyCLONe θα βοηθήσει στον συντονισμό και τη διαχείριση περιστατικών μεγάλης κλίμακας, ενώ θα δημιουργηθεί ένας εθελοντικός μηχανισμός αμοιβαίας μάθησης για την ενίσχυση της ευαισθητοποίησης.

- Εξορθολογισμένα πρότυπα ανθεκτικότητας με αυστηρότερες κυρώσεις. Σε αντίθεση με τη NIS, η NIS2 προβλέπει υψηλότατες κυρώσεις και ισχυρά μέτρα ασφαλείας. Για παράδειγμα, οι παραβάσεις της νομοθεσίας από βασικές οντότητες θα υπόκεινται σε διοικητικά πρόστιμα μέγιστου ύψους τουλάχιστον 10 εκατ. ευρώ ή 2% του συνολικού παγκόσμιου ετήσιου κύκλου εργασιών τους, ενώ οι σημαντικές οντότητες θα υπόκεινται σε πρόστιμα μέγιστου ύψους τουλάχιστον 7 εκατ. ευρώ ή 1,4 % του συνολικού παγκόσμιου ετήσιου κύκλου εργασιών τους.

- Εξορθολογισμένες διαδικασίες αναφορών. Η NIS2 εξορθολογίζει τις υποχρεώσεις υποβολής αναφορών ώστε να αποφευχθεί η πρόκληση υπερβολικής υποβολής και η δημιουργία υπερβολικού φόρτου για τις καλυπτόμενες οντότητες.

- Διευρυμένο εδαφικό πεδίο εφαρμογής: Σύμφωνα με τους νέους κανόνες, συγκεκριμένες κατηγορίες οντοτήτων που δεν είναι εγκατεστημένες στην Ευρωπαϊκή Ένωση αλλά προσφέρουν υπηρεσίες εντός αυτής θα υποχρεούνται να ορίζουν αντιπρόσωπο στην ΕΕ.

DORA

Η πράξη για τη Ψηφιακή Επιχειρησιακή Ανθεκτικότητα (DORA) αντιμετωπίζει ένα θεμελιώδες πρόβλημα στο χρηματοπιστωτικό οικοσύστημα της ΕΕ: πώς ο τομέας μπορεί να παραμείνει ανθεκτικός κατά τη διάρκεια σοβαρών επιχειρησιακών διαταραχών. Πριν από την DORA, τα χρηματοπιστωτικά ιδρύματα χρησιμοποιούσαν την κατανομή κεφαλαίου για τη διαχείριση των σημαντικών κατηγοριών λειτουργικού κινδύνου. Ωστόσο, πρέπει να αντιμετωπίσουν καλύτερα τις προκλήσεις που ανακύπτουν για την ενίσχυση της κυβερνοσφασάλειας τους και να ενσωματώσουν πρακτικές που θα ενισχύσουν την ανθεκτικότητα τους έναντι ενός εξελισσόμενου τοπίου απειλών στο ευρύτερο επιχειρησιακό πλαίσιο τους.

Το δελτίο τύπου του Ευρωπαϊκού Συμβουλίου παρέχει μια περιεκτική δήλωση του σκοπού της Πράξης για την ψηφιακή επιχειρησιακή ανθεκτικότητα:

«Η πράξη DORA καθορίζει ενιαίες απαιτήσεις για την ασφάλεια των συστημάτων δικτύου και πληροφοριών των εταιρειών και οργανισμών που δραστηριοποιούνται στον χρηματοπιστωτικό τομέα, καθώς και των κρίσιμων τρίτων παρόχων υπηρεσιών ΤΠΕ (τεχνολογίες πληροφοριών και επικοινωνιών), όπως πλατφόρμες υπολογιστικού νέφους ή υπηρεσίες ανάλυσης δεδομένων».

Με άλλα λόγια, η DORA δημιουργεί ένα ομοιογενές κανονιστικό πλαίσιο για την ψηφιακή επιχειρησιακή ανθεκτικότητα, ώστε να διασφαλιστεί ότι όλες οι χρηματοπιστωτικές οντότητες μπορούν να προλαμβάνουν και να μετριάζουν τις απειλές στον κυβερνοχώρο.

Σύμφωνα με το άρθρο 2 του κανονισμού, η DORA εφαρμόζεται σε χρηματοπιστωτικές οντότητες, συμπεριλαμβανομένων τραπεζών, ασφαλιστικών επιχειρήσεων, επιχειρήσεων επενδύσεων και παρόχων υπηρεσιών κρυπτοστοιχείων. Ο κανονισμός καλύπτει επίσης κρίσιμα τρίτα μέρη που προσφέρουν σε χρηματοπιστωτικές εταιρείες υπηρεσίες ΤΠΕ και κυβερνοασφάλειας.

Επειδή η DORA είναι κανονισμός και όχι οδηγία, είναι εκτελεστή και ισχύει άμεσα σε όλα τα κράτη μέλη της ΕΕ από την ημερομηνία εφαρμογή της. H DORA συμπληρώνει την Oδηγία NIS2 και αντιμετωπίζει πιθανές επικαλύψεις ως ειδικό δίκαιο (“lex specialis”).

Η συμμόρφωση με τη DORA αναλύεται σε πέντε πυλώνες που καλύπτουν ποικίλες πτυχές της πληροφορικής και της κυβερνοασφάλειας, παρέχοντας στις χρηματοπιστωτικές επιχειρήσεις μια εμπεριστατωμένη βάση για την ψηφιακή ανθεκτικότητα.

- Διαχείριση κινδύνων ΤΠΕ: Οι διαδικασίες εσωτερικής διακυβέρνησης και ελέγχου διασφαλίζουν την αποτελεσματική και συνετή διαχείριση κινδύνων ΤΠΕ.

- Διαχείριση, ταξινόμηση και αναφορά περιστατικών που σχετίζονται με τις ΤΠΕ: Ανίχνευση, διαχείριση και προειδοποίηση περιστατικών που σχετίζονται με ΤΠΕ, με τον καθορισμό, την καθιέρωση και την εφαρμογή μιας διαδικασίας αντιμετώπισης και διαχείρισης περιστατικών κυβερνοασφάλειας.

- Έλεγχος ψηφιακής επιχειρησιακής ανθεκτικότητας: Αξιολόγηση της ετοιμότητας για τη διαχείριση περιστατικών κυβερνοασφάλειας, εντοπισμός ατελειών, ελλείψεων και κενών στην ψηφιακή επιχειρησιακή ανθεκτικότητα και ταχεία εφαρμογή διορθωτικών μέτρων.

- Διαχείριση του κινδύνου ΤΠΕ από τρίτους: Πρόκειται για αναπόσπαστο στοιχείο του κινδύνου κυβερνοασφάλειας εντός του πλαισίου διαχείρισης κινδύνου ΤΠΕ.

- Ανταλλαγή πληροφοριών: Ανταλλαγή πληροφοριών σχετικά με απειλές στον κυβερνοχώρο, συμπεριλαμβανομένων δεικτών συμβιβασμού, τακτικών, τεχνικών και διαδικασιών (TTP) και ειδοποιήσεων για την κυβερνοασφάλεια, για την ενίσχυση της ανθεκτικότητας των χρηματοπιστωτικών οντοτήτων.

Σύμφωνα με το άρθρο 64, ο κανονισμός τέθηκε σε ισχύ στις 17 Ιανουαρίου 2023 και εφαρμόζεται από τις 17 Ιανουαρίου 2025. Είναι επίσης σημαντικό να σημειωθεί ότι το άρθρο 58 ορίζει ότι έως τις 17 Ιανουαρίου 2026, η Ευρωπαϊκή Επιτροπή θα επανεξετάσει “την καταλληλότητα των ενισχυμένων απαιτήσεων για τους νόμιμους ελεγκτές και τα ελεγκτικά γραφεία όσον αφορά την ψηφιακή επιχειρησιακή ανθεκτικότητα”.

Τέσσερα βήματα για τη συμμόρφωση σήμερα

Παρόλο που οι προθεσμίες είναι πιο μακριά, οι επηρεαζόμενοι οργανισμοί δεν χρειάζεται να κάθονται και να περιμένουν. Ο χρόνος (και το χρήμα) είναι πολύτιμος όταν προετοιμάζεστε για την συμμόρφωση με τις απαιτήσεις των NIS2 και DORA. Οι οργανισμοί πρέπει να αξιολογήσουν και να προσδιορίσουν τις ενέργειες που μπορούν να προβούν για να προετοιμαστούν για τους νέους κανόνες.

Οι ακόλουθες συστάσεις αποτελούν ένα καλό σημείο εκκίνησης:

- Διακυβέρνηση και διαχείριση κινδύνων: Κατανοήστε τις νέες απαιτήσεις και αξιολογήστε τις τρέχουσες διαδικασίες διακυβέρνησης και διαχείρισης κινδύνων. Επιπλέον, εξετάστε το ενδεχόμενο να αυξήσετε τη χρηματοδότηση για προγράμματα που βοηθούν στον εντοπισμό απειλών και περιστατικών κυβερνοεπιθέσεων και να ενισχύσετε τις πρωτοβουλίες εκπαίδευσης για την ευαισθητοποίηση σε θέματα κυβερνοασφάλειας σε επίπεδο επιχείρησης.

- Αναφορά περιστατικών: Αξιολογήστε την ωριμότητα της διαχείρισης συμβάντων και της υποβολής εκθέσεων για να κατανοήσετε τις τρέχουσες δυνατότητες και να μετρήσετε την ευαισθητοποίηση σχετικά με τα διάφορα πρότυπα υποβολής εκθέσεων συμβάντων κυβερνοασφάλειας που αφορούν τον κλάδο σας. Θα πρέπει επίσης να ελέγξετε την ικανότητά σας να αναγνωρίζετε καταστάσεις ατυχημάτων που αποφεύγονται την τελευταία στιγμή.

- Δοκιμή ανθεκτικότητας: Αναγνώριση των ταλέντων που απαιτούνται για το σχεδιασμό και τη διεξαγωγή δοκιμών ανθεκτικότητας, συμπεριλαμβανομένων εκπαιδευτικών συνεδρίων για τα μέλη του διοικητικού συμβουλίου σχετικά με τις τεχνικές που χρησιμοποιούνται και τις επιπτώσεις τους.

- Διαχείριση κινδύνων από τρίτους: Για να βοηθήσετε στη δημιουργία ενός σχεδίου περιορισμού των κινδύνων, επικεντρωθείτε στην ενίσχυση της χαρτογράφησης των συμβάσεων και στην αξιολόγηση των τρωτών σημείων τρίτων μερών. Αναγνωρίστε τις υπηρεσίες που είναι απαραίτητες για τη φιλοξενία θεμελιωδών επιχειρηματικών διαδικασιών. Ελέγξτε αν έχει εφαρμοστεί μια αρχιτεκτονική ανοχής σε σφάλματα για να μειωθούν οι επιπτώσεις της διακοπής λειτουργίας κρίσιμων παρόχων.

Το άρθρο αυτό εκπονήθηκε στο πλαίσιο του έργου “Increasing Civic Engagement in the Digital Agenda — ICEDA” με την υποστήριξη της Ευρωπαϊκής Ένωσης και του South East Europe (SEE) Digital Rights Network. Το περιεχόμενο αυτού του άρθρου δεν θα πρέπει να θεωρείται ότι αποτελεί επίσημη θέση της Ευρωπαϊκής Ένωσης ή του SEΕ.

Photo by FLY:D on Unsplash

Raising Awareness Is Critical for Privacy and Data Protection

By Anastasios Arampatzis

Many believe cybersecurity and privacy are about emerging technologies, processes, hackers, and laws. Partially this is true. Technology is pervasive and has changed drastically how we live, work and communicate. High-profile data breaches make the news headlines more frequently than not, and businesses are fined enormous penalties for breaking security and privacy laws.

However, they must remember the most important pillar of data protection and privacy; the human element. Hymans create and use technology, and it is humans who even develop the regulations that govern a respectful and ethical use of technology. What is more, humans mostly feel the impact of data breaches. The human element is also responsible for the majority of data breaches. The Verizon Data Breach Investigations Report highlights that humans are responsible for 82% of successful data breaches.

If this percentage seems high, imagine that many security professionals argue that it is instead closer to 100%. Flawed applications, for example, are the artifact of humans. People manufacture insecure Internet of Things (IoT) devices. And it is humans that choose weak passwords or reuse passwords across multiple applications and platforms.

This is not to imply that we should accuse people of being “the weakest link” in cybersecurity and privacy. On the contrary, these thoughts underline the importance of individuals in preserving a solid security and privacy posture. This demonstrates how essential it is to create a security and privacy culture. Raising awareness about threats and best practices becomes the foundation of a safer digital future.

Data Threats Awareness

Our data is collected daily — your computer, smartphone, and almost every internet-connected device gather data. When you download a new app, create a new account, or join a new social media platform, you will often be asked to provide access to your personal information before you can even use it! This data might include your geographic location, contacts, and photos.

For these businesses, this personal information about you is of tremendous value. Companies use this data to understand their prospects better and launch targeted marketing campaigns. When used properly, the data helps companies better understand the needs of their customers. It serves as the basis for personalization, improving customer service, and creating customer value. They help to understand what works and what doesn’t. They also form the basis for automated and repeatable marketing processes that help companies evolve their operations.

In an article from May 2017, The Economist defined the data industry as the new oil industry. According to LSE Business Review, advertisements accounted for 92% of Facebook’s revenue and above 90% of Google’s revenue. This revenue is equal to approximately 60 billion $.

This is the point where things derail. Businesses store personal data indefinably. They use data to make inferences about your socioeconomic status, demographic information, and preferences. The Cambridge Analytica scandal was a great manifestation of how companies can manipulate our beliefs based on the psychographic profiles created by harvesting vast amounts of “innocent” personal data. Companies do not always use your data to your interest or according to your consent. Google, Apple, Facebook, Amazon, and Microsoft generate value by exploiting them, selling them (for example, via a data broker), or exchanging them for other data.

Besides the threats originating from the misuse of our data by legitimate businesses, there is always the danger coming from malicious actors who actively seek to spot gaps in data protection measures. The same Verizon report indicates that personal data are the target in 76% of data breach incidents. The truth is that data is valuable to criminals as well.

According to Keeper Security, criminals sell your stolen data in the dark web market, doing a profitable business. A Spotify account costs $2.75, a Netflix account up to $3.00, a driver’s license $20.00, a credit card up to $22.00, and a complete medical record $1.000! Now multiply these prices per unit by the million records compromised yearly, and you have a sense of the booming cybercrime economy.

Privacy Best Practices Awareness

If this reality is sending chills down your spine, don’t fret! You can take steps to control how your data is shared. You can’t lock down all your data — even if you stop using the internet, credit card companies and banks record your purchases. But you can take simple steps to manage it and take more control of whom you share it with.

First, it is best to understand the tradeoff between privacy and convenience. Consider what you get in return for handing over your data, even if the service is free. You can make informed decisions about sharing your data with businesses or services. Here are a few considerations:

-Is the service, app, or game worth your personal data?

-Can you control your privacy and still use the service?

-Is the data requested relevant to the app or service?

-If you last used an app several months ago, is it worth keeping it, knowing that it might be collecting and sharing your data?

You can adjust the privacy settings to your comfort level based on these considerations. Check the privacy and security settings for every app, account, or device. These should be easy to find in the Settings section and usually require a few minutes to change. Set them to your comfort level for personal information sharing; generally, it’s wise to lean on sharing less data, not more. You don’t have to adjust the privacy settings for every account at once; start with some apps, which will become a habit over time.

Another helpful habit is to clear your cookies. We’ve all clicked “accept cookies” and have yet to learn what it means. Regularly clearing cookies from your browser will remove certain information placed on your device, often for advertising purposes. However, cookies can pose a security risk, as hackers can easily hijack these files.

Finally, you can try privacy-protecting browsers. Looking after your online privacy can feel complicated, but specific internet browsers make the task easier. Many browsers depreciate third-party cookies and have strong privacy settings by default. Changing browsers is simple but can be very effective for protecting your privacy.

Data Protection Best Practices Awareness

Data privacy and data protection are closely related. Besides managing your data privacy settings, follow some simple cybersecurity tips to keep it safe. The following four steps are fundamental for creating a solid data protection posture.

-Create long (at least 12 characters) unique passwords for each account and device. Use a password manager to store all your passwords. Maintaining dozens of passwords securely is easier than ever, and you only need to remember one password.

-Turn on multifactor authentication (MFA) wherever permitted, even on apps that are about football or music. MFA can help prevent a data breach even if your password is compromised.

-Do not deactivate the automatic updates that come as a default with many software and apps. If you choose to do it manually, make sure you install these updates as soon as they are available.

-Do not click on links or attachments included in phishing messages. You can learn how to spot these emails or SMS by looking closely at the content and the sender’s address. If they promote urgency and fear or seem too good to be true, they are probably trying to trick you. Better safe than sorry.

This article was prepared as part of the project “Increasing Civic Engagement in the Digital Agenda — ICEDA” with the support of the European Union and South East Europe (SEE) Digital Rights Network. The content of this article in no way reflects the views of the European Union or the SEE Digital Rights Network.