Online Shopping: Do we all pay the same price for the same product?

Written by Eirini Volikou*

On the same day, three people enter a book store looking to buy the book The secrets of the Internet. The book retails for €20 but the price is not indicated. The bookseller charges the first customer to walk in – a well-dressed man holding a leather briefcase and the new iPhone – €25 for the book. A while later a regular customer arrives. The bookseller knows that he is a student with poor finances and sells the book to him for €15. Finally, the third customer shows up: a woman who seems to be in a hurry to make the purchase. She ends up purchasing The secrets of the Internet at the price of €23. None of the customers is aware that each one paid a different price, because the bookseller used information from their “profiles” to infer their purchasing power or willingness to pay.

In our capacity as consumers, how would we describe such a practice?

Is it fair or unfair, correct or wrong, lawful or unlawful?

And how would we react were we made aware that it targets us as well?

The above scenario may be completely fictitious but could it materialize in the real world?

In principle, the conditions of “regular” commerce do not allow our scenario to materialize. The obligation to clearly indicate the prices of products in brick-and-mortar stores directly and dramatically limits traders’ freedom to charge individual clients prices higher than those indicated. In e-commerce, though, reality can prove strikingly similar to our fictitious scenario.

The e-commerce reality aka the “bookseller” is alive

It is not uncommon for the indicated price of a good or service on an e-commerce platform to differ from one prospective customer to another, depending on the customer’s profile.

Our online profiles are composed of information such as gender, age, marital status, type and number of devices we use to connect to the internet, geographical location (country or even neighborhood), nationality, preferences and consumer habits (history of searches or purchases) and so on.

This information is made known to the websites we visit via cookies, our IP address, and our user log-in information. It can be used not only to show results and advertisements relevant to us but also to draw conclusions about our purchasing power or willingness to pay. In other words, the role of the fictitious bookseller is played by algorithms that use our profiles to categorize us and to automatically calculate and present a final price that we would be prepared to pay for the subject of our search.

This price may differ between users or categories of users who are often unaware of such categorisation or of the price they would be asked to pay had the platform had no access to their personal data.

This practice is often referred to as personalised pricing or price discrimination.

It is not to be confused with dynamic pricing, in which the price is adjusted based on criteria that relate not to any individual customer but to the market, such as supply and demand.
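The distinction can be made concrete with a toy sketch. Everything in it is hypothetical and for illustration only: the profile labels, the percentage adjustments (chosen so that they reproduce the bookseller's €25, €15 and €23 from the €20 retail price) and the simple demand/supply rule are assumptions, not a description of how any real platform works.

```python
# Toy contrast between personalised and dynamic pricing.
# All names and rules below are hypothetical, for illustration only.

BASE_PRICE_CENTS = 2000  # the book's retail price of EUR 20, in cents

# Percentage adjustments matching the fictitious bookseller's three customers.
PROFILE_ADJUSTMENT_PCT = {
    "affluent": 25,     # well-dressed customer with the new iPhone
    "student": -25,     # regular customer with poor finances
    "in_a_hurry": 15,   # customer who wants to buy quickly
}


def personalised_price(base_cents: int, profile: str) -> int:
    """Price depends on who is asking (the customer's profile)."""
    pct = PROFILE_ADJUSTMENT_PCT.get(profile, 0)
    return base_cents * (100 + pct) // 100


def dynamic_price(base_cents: int, demand: int, supply: int) -> int:
    """Price depends only on market conditions, not on the customer."""
    return base_cents * demand // supply


profiles = ["affluent", "student", "in_a_hurry"]
personalised = [personalised_price(BASE_PRICE_CENTS, p) for p in profiles]
dynamic = [dynamic_price(BASE_PRICE_CENTS, demand=120, supply=100) for _ in profiles]

print(personalised)  # [2500, 1500, 2300] -> a different price per profile
print(dynamic)       # [2400, 2400, 2400] -> the same price for everyone
```

Under personalised pricing the three customers see three different prices at the same moment; under dynamic pricing the price may move over time, but at any given moment it is the same for all of them.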

The issue of personalized pricing surfaced in the public debate in the early 2000s when regular Amazon users noticed that by deleting or blocking the relevant cookies from their devices – which meant being regarded as new users by the platform – they could purchase DVDs at lower prices. The widespread use of e-commerce in combination with the rampant collection and processing of our personal data online (profiling, data scraping, Big Data) has since paved the way for the facilitation and spread of personalized pricing.

The experiment aka the “bookseller” in action

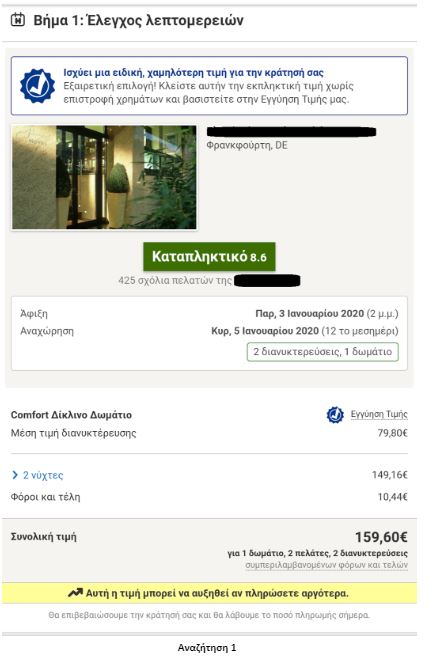

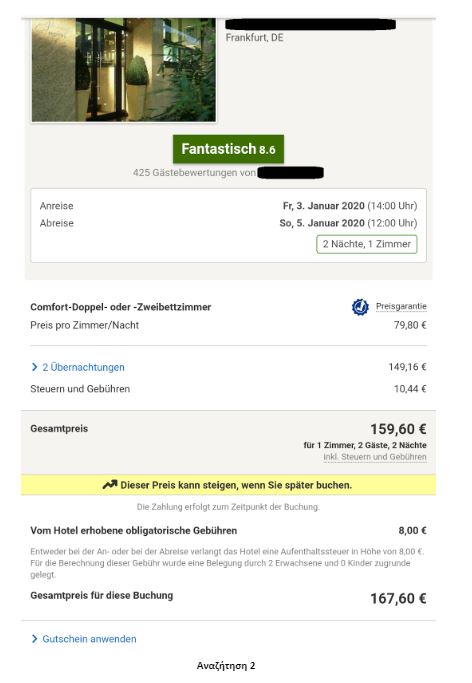

To put the practice to the test, consecutive searches and price comparisons were conducted on the same day (17/12/2019) for exactly the same room type (a “comfort” double room with one double or two twin beds) with breakfast for 2 travelers at the same hotel in Frankfurt, Germany on 3-5 January 2020. The searches were conducted on two different hotel booking websites and in all instances they reflect the lowest, non-refundable price option. The results are shown below:

Website Α

| Device | Browsing method | Website version | Price in € | |

| 1 | Τablet | Browser | Greek | 159,60 |

| 2 | Tablet | Browser | German | 167,60 |

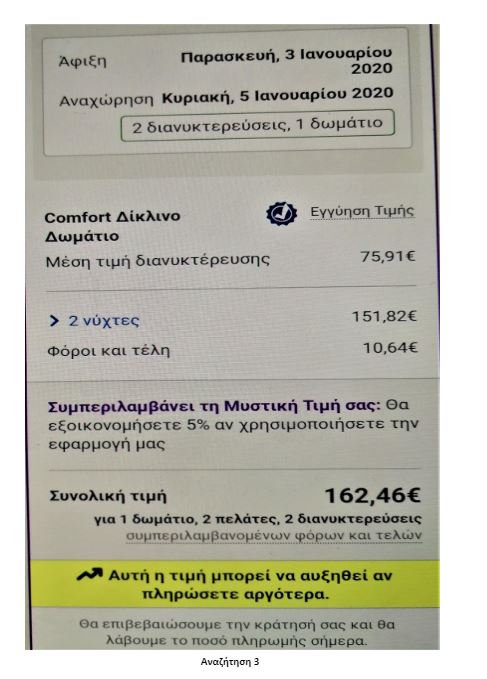

| 3 | Tablet | Application | Greek | 162,46 |

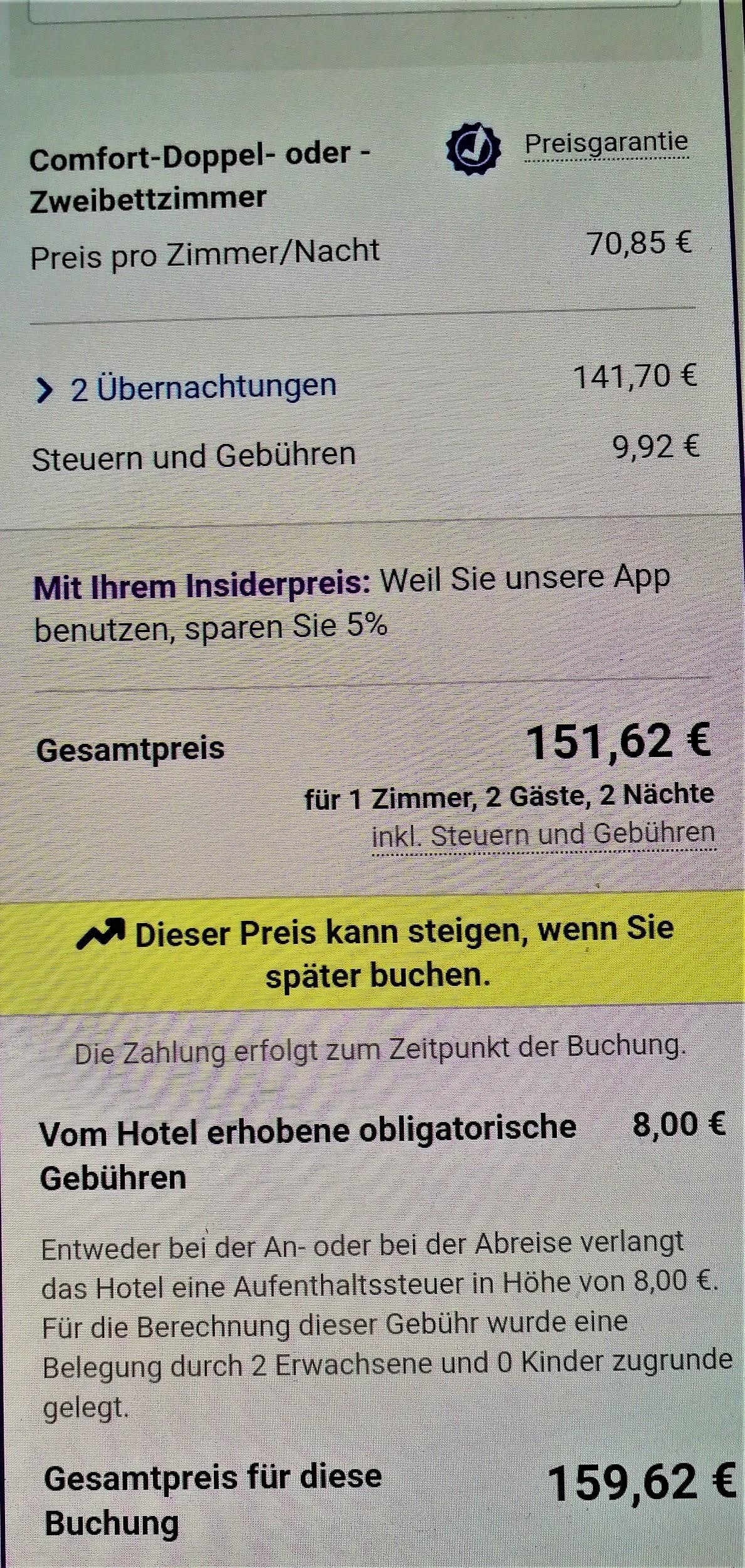

| 4 | Tablet | Application | German | 159,62 |

Website Β

| Device | Browsing method | Website version | Price in € | |

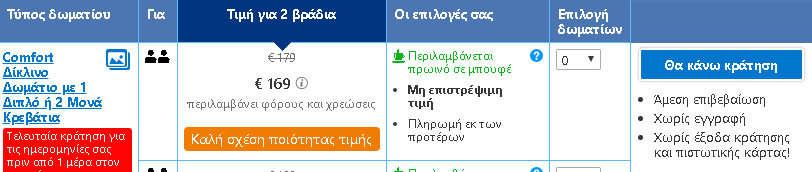

| 5 | Laptop | Browser | Greek | 169,00 |

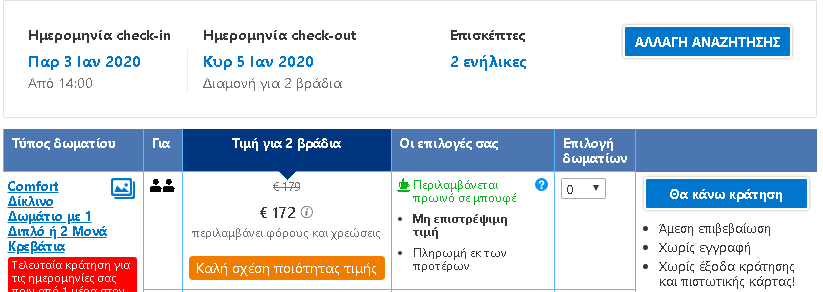

| 6 | Laptop | Incognito browser | Greek | 172,00 |

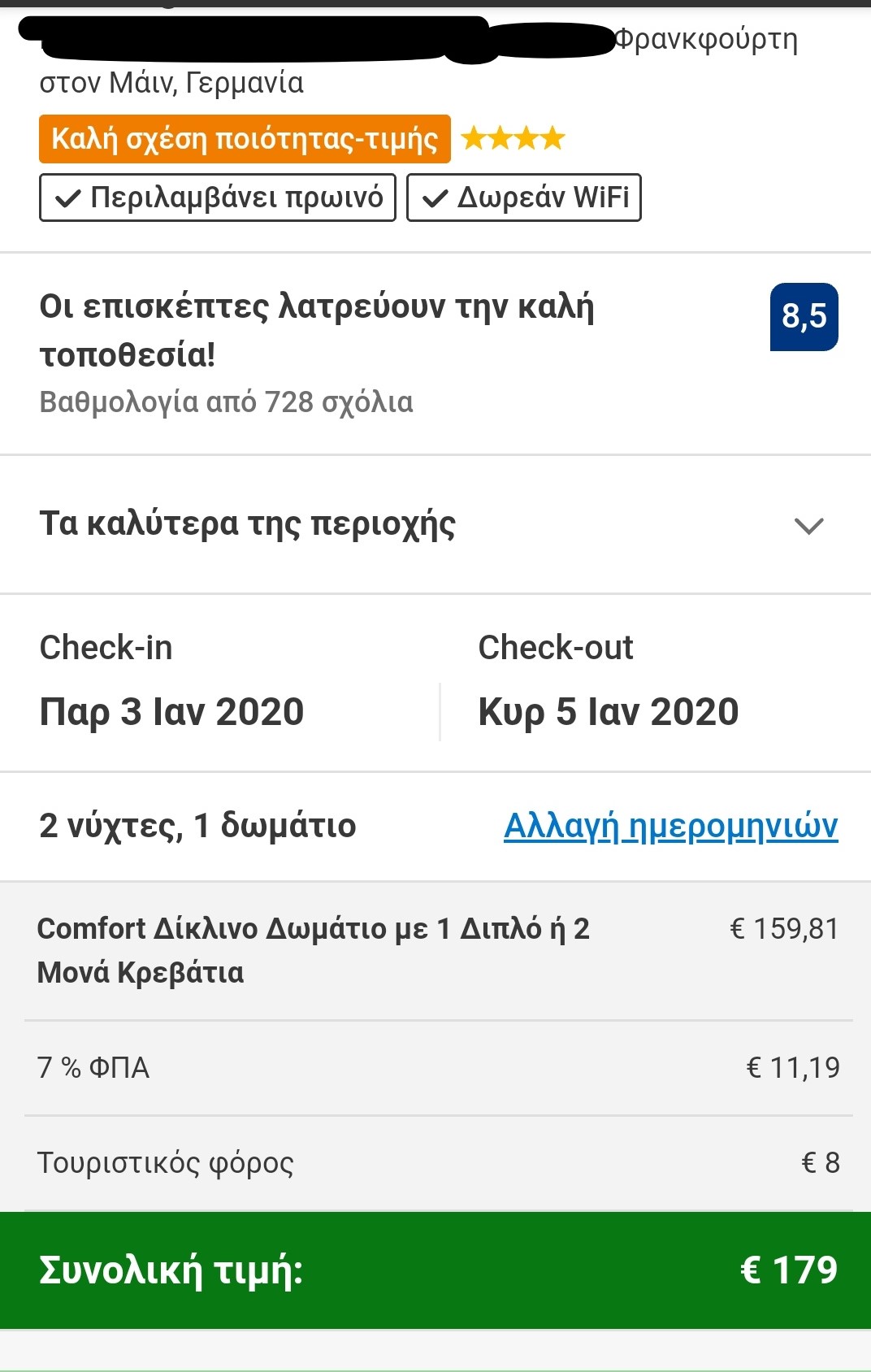

| 7 | Smartphone | Browser | Greek | 179,00 |

Search 1

Search 2

Search 3

Search 4

Search 5

Search 6

Search 7

The search results show a range of different prices for the same room on the same dates.

There is a noteworthy price divergence depending on the consumer’s country or location. Even for the same country, though, the prices differ considerably depending on the type of device combined with the browsing method used.

All this information – or even the lack thereof in the case of incognito browsing – appears to play a role in the final price calculation and, hence, in the customized pricing for the users.
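To quantify the divergence, the spread between the lowest and highest price on each website can be computed directly from the seven search results listed above. This is only a small arithmetic sketch; the helper name `spread` is illustrative.

```python
# Prices in EUR taken from the seven searches (Website A: searches 1-4,
# Website B: searches 5-7).
prices = {
    "Website A": [159.60, 167.60, 162.46, 159.62],
    "Website B": [169.00, 172.00, 179.00],
}


def spread(values):
    """Return (absolute spread in EUR, spread as % of the lowest price)."""
    low, high = min(values), max(values)
    diff = high - low
    return round(diff, 2), round(100 * diff / low, 1)


for site, values in prices.items():
    diff, pct = spread(values)
    print(f"{site}: {diff:.2f} EUR ({pct}%)")
# Website A: 8.00 EUR (5.0%)
# Website B: 10.00 EUR (5.9%)
```

On both websites the same room on the same dates varied by roughly 5 to 6 percent of the lowest quoted price, depending only on device, browsing method and website version.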

How is personalized pricing dealt with?

Personalised pricing, arguably, brings advantages especially for consumers with low purchasing power who benefit from lower prices or discounts and can have access to products or services that they could otherwise not afford. However, consumer categorization and price differentiation give rise to concerns because they are processes mostly unknown to consumers and obscure as to the specific criteria they employ.

At the same time, the use of parameters like the ones that appeared to influence the price in the hotel room experiment, i.e. country/language, device, and browsing method, does not guarantee a classification of purchasing power that corresponds to reality. What is more, research has shown that a majority of consumers, when made aware of the application of personalized pricing, reject the practice as unfair or unacceptable. This largely holds even where personalized pricing would benefit them, once they are made aware that such benefit requires the collection of their data and the monitoring of their online or offline behavior.

As for the legal approach to personalised pricing, several fields of law are relevant.

In the framework of data protection law, the General Data Protection Regulation (GDPR) does not explicitly regulate personalized pricing. Nevertheless, it provides that an undertaking using personal data (including IP address, location, the cookies stored in a device, etc.) is obliged to inform the persons concerned of the purposes for which the data are being used.

It follows that, if consumer profiles are being used for the calculation of the final price of a good or service, this should, at the very least, be mentioned in the privacy policy of the e-commerce platform.

The GDPR also requires consumer consent if personalized pricing has been based on sensitive personal data or if personal data is used in automated decision-making concerning the consumer.

From a consumer protection law perspective, traders can, in principle, set the prices for their goods or services freely insofar as they duly inform consumers about these prices or the manner in which they have been calculated. Personalized pricing could be prohibited if applied in combination with unfair commercial practices provided for in the text of the relevant Directive 2005/29/EC.

However, recently adopted Directive (EU) 2019/2161, which aims to achieve the better enforcement and modernization of Union consumer protection rules, will hopefully contribute to limiting consumer uncertainty and improving their position.

With the amendments that this Directive brings about not only is personalized pricing recognized as a practice but also the obligation is established to inform consumers when the price to be paid has been personalized on the basis of automated decision-making so they can take the potential risks into consideration in their purchasing decision (Recital 45 and Article 4(4) of the Directive). The Directive was published in the Official Journal of the EU on 18/12/2019 and the Member States should apply the measures transposing it by 28/5/2022.

It should be noted, albeit briefly, that personalized pricing is also a concern for competition law, in particular the possibility that firms holding a dominant position in a market charge higher prices to specific consumer categories for reasons not related to costs or utilities.

In any case, staying protected online is also a personal matter.

The Roadmap to Safe Navigation (in Greek), issued by Homo Digitalis, sets out behaviors we can adopt to protect our personal data while navigating the Internet.

Consumers can adopt behaviors that help protect their personal data while browsing, such as using a virtual private network (VPN), opting for browsers and search engines that do not track users and using email and chat services that offer end-to-end encryption. To deal with personalized pricing, in particular, thorough market research, price comparison on different websites, or even different language versions of a single website, trying different browsing methods and, if possible, different devices can be a good start. It is important for e-commerce users to stay informed and alert in order to avoid having their profile used as a tool towards charging higher prices.

*Eirini Volikou, LL.M is a lawyer specializing in European and European Competition Law. She has extensive experience in the legal training of professionals on Competition Law, having worked as Deputy Head of the Business Law Section at the Academy of European Law (ERA) in Germany and, currently, as Course Co-ordinator with Jurisnova Association of the NOVA Law School in Lisbon.

SOURCES

1) European Commission – Consumer market study on online market segmentation through personalized pricing/offers in the European Union (2018)

2) Directive (EU) 2019/2161 of the European Parliament and of the Council of 27 November 2019 amending Council Directive 93/13/EEC and Directives 98/6/EC, 2005/29/EC and 2011/83/EU of the European Parliament and of the Council as regards the better enforcement and modernisation of Union consumer protection rules

3) Directive 2005/29/EC of the European Parliament and of the Council of 11 May 2005 concerning unfair business-to-consumer commercial practices in the internal market and amending Council Directive 84/450/EEC, Directives 97/7/EC, 98/27/EC and 2002/65/EC of the European Parliament and of the Council and Regulation (EC) No 2006/2004 of the European Parliament and of the Council (‘Unfair Commercial Practices Directive’)

4) Commission Staff Working Document – Guidance on the implementation/application of Directive 2005/29/EC on unfair commercial practices [SWD(2016) 163 final]

5) Regulation (EU) 2016/679 of the European Parliament and of the Council of 27 April 2016 on the protection of natural persons with regard to the processing of personal data and on the free movement of such data, and repealing Directive 95/46/EC (General Data Protection Regulation)

6) Poort, J. & Zuiderveen Borgesius, F. J. (2019). Does everyone have a price? Understanding people’s attitude towards online and offline price discrimination. Internet Policy Review, 8(1)

7) OECD, ‘Personalised Pricing in the Digital Era’, Background Note by the Secretariat for the joint meeting between the OECD Competition Committee and the Committee on Consumer Policy on 28 November 2018 [DAF/COMP(2018)13]

8) BEUC, ‘Personalised Pricing in the Digital Era’, Note for the joint meeting between the OECD Competition Committee and the Committee on Consumer Policy on 28 November 2018 [DAF/COMP/WD(2018)129]

9) http://news.bbc.co.uk/2/hi/business/914691.stm

Homo Digitalis becomes full EDRi member

Today Homo Digitalis became a full member of European Digital Rights (EDRi), the most prominent coalition of digital rights organizations in the world!

We are the first Greek organization to make it and we are extremely happy to be representing our country.

Homo Digitalis had been an observer member of EDRi since November 2018. Our actions for digital rights protection at both the Greek and European levels attracted the interest of leading digital rights organizations.

We warmly thank all our members for their contribution to our work during these years!

D3, the first Portuguese digital rights organization, also became a full member on the same day.

Homo Digitalis speaks to ΕΘΝΟΣ about COVID-19 and related contact tracing apps

The President of Homo Digitalis gave an interview to journalist Mary Tsinu from ΕΘΝΟΣ about contact tracing apps, COVID-19, and the challenges that arise for human rights in the digital age.

100+ organizations in a joint statement for COVID-19

On April 2, more than 100 civil society organizations, including Homo Digitalis, signed a joint statement on privacy issues related to the COVID-19 pandemic.

The organizations include among others Access Now, Amnesty International, Human Rights Watch, Privacy International, European Digital Rights (EDRi) and Homo Digitalis.

The statement is available here.

Homo Digitalis signs the EAID Declaration

On 29 March, Homo Digitalis signed the European Academy for Freedom of Information and Data Protection (EAID) Declaration on personal data protection and freedom of information during the COVID-19 pandemic.

The declaration calls on all of us to stay vigilant so that the measures taken to counter COVID-19 do not violate human rights. Civil rights and liberties are inherent to modern democracies and should be protected even during the serious pandemic we are facing.

Many prominent scientists and human rights advocates have signed the declaration.

The declaration is available here.

Joint Letter to the Council of the EU on TERREG

On March 27, European Digital Rights (EDRi) and many more civil society organizations, including Homo Digitalis, sent a joint letter to the Council of the EU regarding the proposed EU Terrorist Content Regulation (TERREG).

In the letter, we express our grave concerns regarding the proposed text, in particular with respect to privacy, freedom of expression and freedom of information.

You may read the letter on the EDRi website or here.

Request for the Greek DPA's opinion on the Greek Police Agreement on Smart Policing

Today, 19.03.2020, Homo Digitalis filed a request with the President of the Greek DPA for an opinion on the agreement between the Greek Police and INTRACOM TELECOM for the provision of smart policing systems.

This agreement provides for the processing of citizens’ biometric data (fingerprints and photos) through portable devices, among others.

It is essential that the Greek DPA provides its opinion on the issue, taking into account the important privacy challenges for all data subjects in Greece.

The full text of our request in Greek is available here.

Homomorphic Encryption: What It Is and Known Applications

Written by Anastasios Arampatzis

Every day, organizations handle a lot of sensitive information, such as personally identifiable information (PII) and financial data, that needs to be encrypted both when it is stored (data at rest) and when it is being transmitted (data in transit). Modern encryption algorithms are virtually unbreakable, at least until the coming of quantum computing, because breaking them requires so much processing power that the whole process is too costly and time-consuming to be feasible. However, it is also impossible to process the data without first decrypting it, and decrypting data makes it vulnerable to hackers.

The problem with encrypting data is that sooner or later, you have to decrypt it. You can keep your cloud files cryptographically scrambled using a secret key, but as soon as you want to actually do something with those files, anything from editing a Word document to querying a database of financial data, you have to unlock the data and leave it vulnerable. Homomorphic encryption, an advancement in the science of cryptography, could change that.

What is Homomorphic Encryption?

The purpose of homomorphic encryption is to allow computation on encrypted data. Thus data can remain confidential while it is processed, enabling useful tasks to be accomplished with data residing in untrusted environments. In a world of distributed computation and heterogeneous networking this is a hugely valuable capability.

A homomorphic cryptosystem is like other forms of public-key encryption in that it uses a public key to encrypt data and allows only the individual with the matching private key to access the unencrypted data. However, what sets it apart from other forms of encryption is that it uses an algebraic system to allow you or others to perform a variety of computations (or operations) on the encrypted data.

In mathematics, homomorphic describes the transformation of one data set into another while preserving relationships between elements in both sets. The term is derived from the Greek words for “same structure.” Because the data in a homomorphic encryption scheme retains the same structure, identical mathematical operations, whether they are performed on encrypted or decrypted data, will result in equivalent results.
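A minimal sketch of what "preserving structure" means, using a deliberately simple map rather than a real encryption scheme: modular exponentiation turns addition of inputs into multiplication of outputs. The modulus `P`, base `G` and function name `f` are illustrative choices, and nothing here is secure.

```python
# A structure-preserving map (homomorphism), NOT an encryption scheme:
# f(x) = G**x mod P satisfies f(a + b) == f(a) * f(b) mod P.

P = 23  # small prime modulus (illustrative)
G = 5   # base (illustrative)


def f(x: int) -> int:
    """Map an integer x to G**x mod P."""
    return pow(G, x, P)


a, b = 6, 9

# Operate on the inputs first, then map...
left = f(a + b)
# ...or map first, then apply the corresponding operation to the outputs.
right = (f(a) * f(b)) % P

print(left == right)  # True
```

Homomorphic encryption schemes rely on the same idea: an operation applied to ciphertexts corresponds to a known operation on the underlying plaintexts.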

Finding a general method for computing on encrypted data had been a goal in cryptography since it was proposed in 1978 by Rivest, Adleman and Dertouzos. Interest in this topic is due to its numerous applications in the real world. The development of fully homomorphic encryption is a revolutionary advance, greatly extending the scope of the computations which can be applied to process encrypted data homomorphically. Since Craig Gentry published his idea in 2009, there has been huge interest in the area, with regard to improving the schemes, implementing them and applying them.

Types of Homomorphic Encryption

There are three types of homomorphic encryption. The primary difference between them is related to the types and frequency of mathematical operations that can be performed on the ciphertext. The three types of homomorphic encryption are:

- Partially Homomorphic Encryption

- Somewhat Homomorphic Encryption

- Fully Homomorphic Encryption

Partially homomorphic encryption (PHE) allows only select mathematical functions to be performed on encrypted values. This means that only one operation, either addition or multiplication, can be performed an unlimited number of times on the ciphertext. A well-known example is unpadded (“textbook”) RSA, which is homomorphic with respect to multiplication; RSA is commonly used in establishing secure connections through SSL/TLS.

A somewhat homomorphic encryption (SHE) scheme is one that supports both addition and multiplication, but only up to a certain complexity: these operations can be performed only a limited number of times.
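The multiplicative property of RSA can be seen in a toy sketch. It uses unpadded ("textbook") RSA with tiny, insecure parameters chosen purely for illustration; real deployments use padding, which deliberately removes this property. The modular inverse via `pow(e, -1, phi)` assumes Python 3.8 or later.

```python
# Toy textbook RSA (no padding, tiny primes): illustration only, not secure.

p, q = 61, 53
n = p * q                  # public modulus
phi = (p - 1) * (q - 1)
e = 17                     # public exponent
d = pow(e, -1, phi)        # private exponent (modular inverse of e)


def encrypt(m: int) -> int:
    return pow(m, e, n)


def decrypt(c: int) -> int:
    return pow(c, d, n)


c1, c2 = encrypt(7), encrypt(3)

# Multiply the ciphertexts without ever decrypting them.
product_ciphertext = (c1 * c2) % n

# Decrypting the product yields the product of the plaintexts.
print(decrypt(product_ciphertext))  # 21
```

Only multiplication carries through the encryption here; there is no way to add the two plaintexts by operating on `c1` and `c2`, which is what makes the scheme partially rather than fully homomorphic.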

Fully Homomorphic Encryption

Fully homomorphic encryption (FHE), while still in the development stage, has a lot of potential for making functionality consistent with privacy by helping to keep information secure and accessible at the same time. Developed from the somewhat homomorphic encryption scheme, FHE supports both addition and multiplication any number of times and makes secure multi-party computation more efficient. Unlike other forms of homomorphic encryption, it can handle arbitrary computations on your ciphertexts.

The goal behind fully homomorphic encryption is to allow anyone to use encrypted data to perform useful operations without access to the encryption key. In particular, this concept has applications for improving cloud computing security. If you want to store encrypted, sensitive data in the cloud but don’t want to run the risk of a hacker breaking into your cloud account, it provides you with a way to pull, search, and manipulate your data without having to allow the cloud provider access to your data.

Applications of Fully Homomorphic Encryption

Craig Gentry mentioned in his graduation thesis that “Fully homomorphic encryption has numerous applications. For example, it enables private queries to a search engine – the user submits an encrypted query and the search engine computes a succinct encrypted answer without ever looking at the query in the clear. It also enables searching on encrypted data – a user stores encrypted files on a remote file server and can later have the server retrieve only files that (when decrypted) satisfy some boolean constraint, even though the server cannot decrypt the files on its own. More broadly, fully homomorphic encryption improves the efficiency of secure multi party computation.”

Researchers have already identified several practical applications of FHE, some of which are discussed herein:

- Securing Data Stored in the Cloud. Using homomorphic encryption, you can secure the data that you store in the cloud while also retaining the ability to calculate and search ciphered information that you can later decrypt without compromising the integrity of the data as a whole.

- Enabling Data Analytics in Regulated Industries. Homomorphic encryption allows data to be encrypted and outsourced to commercial cloud environments for research and data-sharing purposes while protecting user or patient data privacy. It can be used for businesses and organizations across a variety of industries including financial services, retail, information technology, and healthcare to allow people to use data without seeing its unencrypted values. Examples include predictive analysis of medical data without putting data privacy at risk, preserving customer privacy in personalized advertising, financial privacy for functions like stock price prediction algorithms, and forensic image recognition.

- Improving Election Security and Transparency. Researchers are working on how to use homomorphic encryption to make democratic elections more secure and transparent. For example, the Paillier encryption scheme, which uses addition operations, would be best suited for voting-related applications because it allows users to add up various values in an unbiased way while keeping their values private. This technology could not only protect data from manipulation but also allow it to be independently verified by authorized third parties.
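The voting idea can be sketched with a toy version of the Paillier scheme, in which multiplying ciphertexts adds the underlying plaintexts. This is a minimal illustration under stated assumptions: the primes are tiny and insecure, the common simplification g = n + 1 is used, and the ballot encoding (1 for "yes", 0 for "no") is hypothetical. A real voting system would also need proofs that each ballot is valid, which are omitted here.

```python
import math
import secrets

# Toy Paillier keypair (tiny primes, illustration only, not secure).
p, q = 293, 433
n = p * q
n_sq = n * n
g = n + 1                                            # common simplification
lam = (p - 1) * (q - 1) // math.gcd(p - 1, q - 1)    # lcm(p-1, q-1)
mu = pow(lam, -1, n)                                 # valid because g = n + 1


def encrypt(m: int) -> int:
    """Encrypt m (0 <= m < n) with fresh randomness r coprime to n."""
    while True:
        r = secrets.randbelow(n - 1) + 1
        if math.gcd(r, n) == 1:
            break
    return (pow(g, m, n_sq) * pow(r, n, n_sq)) % n_sq


def decrypt(c: int) -> int:
    u = pow(c, lam, n_sq)
    return ((u - 1) // n * mu) % n


# Hypothetical ballots: 1 = "yes", 0 = "no".
votes = [1, 0, 1, 1, 0]
encrypted_votes = [encrypt(v) for v in votes]

# The tallier multiplies ciphertexts; it never sees an individual vote.
encrypted_tally = 1
for c in encrypted_votes:
    encrypted_tally = (encrypted_tally * c) % n_sq

# Only the holder of the private key can decrypt the total.
print(decrypt(encrypted_tally))  # 3
```

Because encryption is randomized, two "yes" ballots produce different ciphertexts, so equal votes cannot be spotted by comparing encrypted ballots.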

Limitations of Fully Homomorphic Encryption

There are currently two known limitations of FHE. The first limitation is support for multiple users. Suppose there are many users of the same system (which relies on an internal database that is used in computations), and who wish to protect their personal data from the provider. One solution would be for the provider to have a separate database for every user, encrypted under that user’s public key. If this database is very large and there are many users, this would quickly become infeasible.

Next, there are limitations for applications that involve running very large and complex algorithms homomorphically. All fully homomorphic encryption schemes today have a large computational overhead, which describes the ratio of computation time in the encrypted version versus computation time in the clear. Although polynomial in size, this overhead tends to be a rather large polynomial, which increases runtimes substantially and makes homomorphic computation of complex functions impractical.

Implementations of Fully Homomorphic Encryption

Some of the world’s largest technology companies have initiated programs to advance homomorphic encryption to make it more universally available and user-friendly.

Microsoft, for instance, has created SEAL (Simple Encrypted Arithmetic Library), a set of encryption libraries that allow computations to be performed directly on encrypted data. Powered by open-source homomorphic encryption technology, Microsoft’s SEAL team is partnering with companies like IXUP to build end-to-end encrypted data storage and computation services. Companies can use SEAL to create platforms to perform data analytics on information while it’s still encrypted, and the owners of the data never have to share their encryption key with anyone else. The goal, Microsoft says, is to “put our library in the hands of every developer, so we can work together for more secure, private, and trustworthy computing.”

Google also announced its backing for homomorphic encryption by unveiling its open-source cryptographic tool, Private Join and Compute. Google’s tool is focused on analyzing data in its encrypted form, with only the insights derived from the analysis visible, and not the underlying data itself.

Finally, with the goal of making homomorphic encryption widespread, IBM released the first version of its HElib C++ library in 2016, but it reportedly “ran 100 trillion times slower than plaintext operations.” Since that time, IBM has continued working to combat this issue and has come up with a version that is 75 times faster, but it still lags far behind plaintext operations.

Conclusion

In an era when the focus on privacy is increased, mostly because of regulations such as GDPR, the concept of homomorphic encryption is one with a lot of promise for real-world applications across a variety of industries. The opportunities arising from homomorphic encryption are almost endless. And perhaps one of the most exciting aspects is how it combines the need to protect privacy with the need to provide more detailed analysis. Homomorphic encryption has transformed an Achilles heel into a gift from the gods.

This article was originally published on the Venafi blog at: https://www.venafi.com/blog/homomorphic-encryption-what-it-and-how-it-used.