Homo Digitalis proposal on the Draft Law on Personal Data

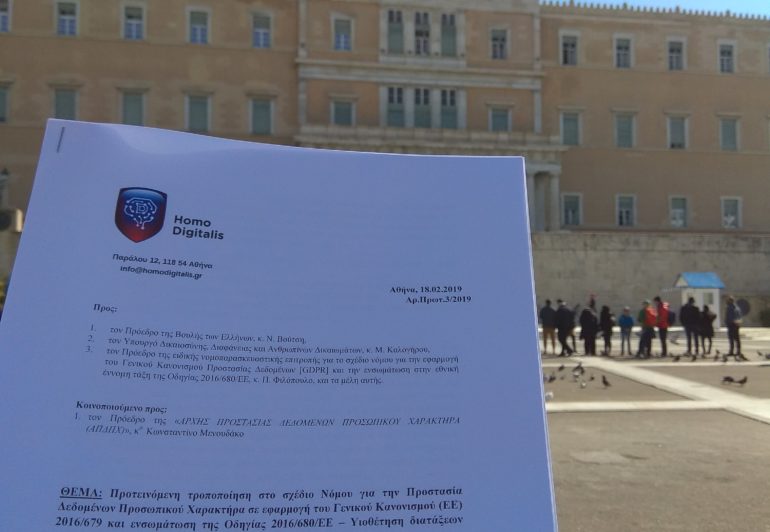

On 18 February 2019, Homo Digitalis submitted a proposal for an amendment to the Draft Law on Personal Data Protection, which implements Regulation (EU) 2016/679 (the General Data Protection Regulation, GDPR) and transposes Directive (EU) 2016/680.

Specifically, Homo Digitalis proposed that the Draft Law include the provision of Article 80, paragraph 2 of the GDPR. Under this provision, the non-profit bodies it describes could, independently of any mandate from the data subject, that is, without being instructed by him or her:

- have the right to lodge a complaint with the supervisory authority (the DPA in Greece)

- have the right to an effective judicial remedy against a legally binding decision of the supervisory authority (the DPA in Greece)

- and have the right to an effective judicial remedy against a controller or processor.

We consider the adoption of GDPR Article 80, paragraph 2 particularly important, because it would allow non-profit bodies in Greece, such as Homo Digitalis, to act as enforcers and guardians of the strict implementation of personal data law and to defend the rights of data subjects. The financial crisis that has plagued Greek society in recent years makes it particularly difficult, even unsustainable, for citizens to bear the cost of asserting their rights. The strongest protection of data subjects’ rights against abuses by natural and legal persons will therefore be achieved by establishing rules that, in line with the EU legislator’s recommendations, enable non-profit bodies to act independently, without needing an assignment or mandate.

We recall that Homo Digitalis had already submitted an open proposal on 20 April 2018, addressed to all Members of the Greek Parliament.

The present proposal was communicated to the President of the special regulatory Committee for the draft law on the implementation of the General Data Protection Regulation (GDPR) and the transposition of Directive (EU) 2016/680 into national law, Mr. P. Filopoulos, and to the members of the committee, as well as to the President of the Greek Parliament, Mr. N. Voutsis, and to the Minister of Justice, Transparency and Human Rights, Mr. M. Kalogirou.

We are very optimistic that the proposal of Homo Digitalis will be taken seriously into consideration and that the provision of Article 80, paragraph 2 will be incorporated in the final draft law.

You can read the proposal of Homo Digitalis in Greek here.

Social Engineering as a threat to Society

Written by Anastasios Arampatzis*

Social engineering is defined as the psychological manipulation of people into performing actions or divulging confidential information. It is a technique that exploits our cognitive biases and our basic instincts, such as trust, for the purpose of information gathering, fraud or system access. Social engineering is the “favourite” tool of cyber criminals and is now used primarily through social networking platforms.

Social Engineering in the context of cyber-security

The conduct of staff has a significant impact on the level of an organisation’s cyber-security, which by extension means that social engineering is a major threat.

The way we train our staff in cyber-security affects the cyber-security of our organisation as a whole. Recognising the cultural background of our company’s staff, and planning their training so that it addresses various cognitive biases, can help establish effective information security. The ultimate objective should be to develop a cyber-security culture, meaning the attitudes, beliefs, knowledge and behaviour that help protect an organisation’s sensitive and relevant information. A substantial part of a cyber-security culture is awareness of the risk of social engineering. If employees do not consider themselves part of this effort, they will disregard the security interests of the organisation.

Cognitive exploitation

The various techniques of social engineering are based on specific characteristics of the human decision-making process, known as cognitive biases. These biases are by-products of the brain’s search for the easiest possible way to process information and make decisions swiftly. One characteristic example is representativeness, namely the tendency to group related items or events. Each time we see a car, we do not have to remember the manufacturer or the colour. Our mind sees the object, the shape and the movement, and tells us that this is a “car”. Social engineers exploit this characteristic by sending phishing messages. We receive a message bearing the Amazon logo and we do not check whether it is genuine. Our mind says that it comes from Amazon and that we trust it, so we click the link and give away our personal data, such as our card number. Similar attacks, such as manipulation or fraud by phone, aim to extract confidential information from staff. Anyone who is not adequately trained to face such attacks will not even realise they are happening.

Principles of Influence

Social engineering is largely based on the six principles of influence outlined in Robert Cialdini’s book “Influence: The Psychology of Persuasion”, which in brief are:

- Reciprocity: obligation to give when you receive

- Consistency: looking for and asking for commitments that can be made

- Consensus: people will look to the actions of others to determine their own

- Authority: people will follow credible knowledgeable experts

- Liking: people prefer to say yes to those that they like

- Scarcity: people want more of those things there are less of

The scandal of Cambridge Analytica

After the election of President Trump, many media outlets discussed the possibility that social engineering strategies had been used to influence public opinion. The revelations about Cambridge Analytica and its use of Facebook users’ data not only raise doubts about data privacy and the lack of user consent, but also demonstrate the ease with which companies can plan and run social engineering campaigns against a whole society.

As with commercial advertising, it is very important to know your target group in order to reach your goal with the least possible effort. This is true of every influence campaign, and what the Cambridge Analytica scandal proved is that social engineering is not only a threat to the cyber-security of a company or an agency.

Social engineering is a threat to political stability and to free and independent political dialogue. The advertising techniques used on social networking platforms raise many ethical dilemmas. Political manipulation and the spread of misinformation and disinformation greatly aggravate the existing moral issues.

The threat to Societies

Is it possible for social engineering to trigger a war or social unrest? Is it possible for foreigners to deceive the citizens of a state into voting against their national interest? If a head of state (I will not use the word leader) wants to manipulate his or her state’s citizens, can he or she succeed? The answer to all these questions is yes. Social engineering through digital platforms, which have invaded every social structure, is a very serious threat.

The fundamental idea of democracy is that power is vested in the people and exercised directly by them. Citizens can express their opinions through an open, protected and free dialogue. Accountability, especially of government officials but also of individuals, is an equally important principle of democracy. The mass collection and exploitation of personal data with no accountability endangers these principles.

However, it should be noted at this point that social networking platforms such as Facebook are not solely to blame for every disinformation campaign or act of political manipulation. These platforms actually reflect our own actions. We create our own sterile world, our “circle of trust”. The threat is therefore not the platforms themselves, even if they bear a share of responsibility for the way they collect data and for their advertising practices. The real threat is malicious actors and the way they exploit these platforms.

Large-scale social engineering campaigns, which take advantage of human trust, contaminate public dialogue with misinformation, distort reality and can push societies to the brink. The truth is doubted more than ever and political polarisation is increasing. Spreading news on social media with no accountability leads to political distortion, a lack of confidence in the political system and the election of extreme political parties. In brief, social engineering is a serious threat to social and political stability.

Response to the threat

Since the tactics of social engineering target our lack of knowledge, our unawareness and our prejudices, the key to tackling it is awareness. Raising awareness has a dual effect: on the one hand, we can develop strategies and good practices to confront social engineering as such; on the other hand, we can develop policies to mitigate its effects.

Unlike the response to malicious software, we cannot address social engineering by simply “installing” some kind of software on humans to keep them safe. As Christopher Hadnagy notes in his book “Social Engineering: The Art of Human Hacking”, social engineering requires a holistic, people-focused approach, built around the following axes:

- Learning to recognise social engineering attacks

- Creation of a personalised program on cyber-security awareness

- Awareness of the value of the information that social engineers seek

- Constantly updated software

- Exercises using simulation software and “serious” games (gamification)

Confronting social engineering should become part of a wider education in digital security. To combat social engineering at the level of society, we should be educated about the vulnerabilities of modern means of communication (e.g. social media), about the reasons why they can be used to manipulate people (e.g. personalised advertising, political communication) and about the ways in which people are manipulated (e.g. fake news). Awareness is the key to developing critical thinking against social engineering.

*Anastasios Arampatzis is a member of Homo Digitalis and a retired Air Force officer with more than 25 years of experience in information security. During his service in the Air Force, he was a certified NATO evaluator for cyber-security and was honoured for his knowledge and efficiency. He is now a columnist for Tripwire’s State of Security blog and for the Venafi blog. His articles have been published on many well-respected websites.